For years, cosmologists have argued over a simple question with an awkward answer: How fast is the universe expanding right now?

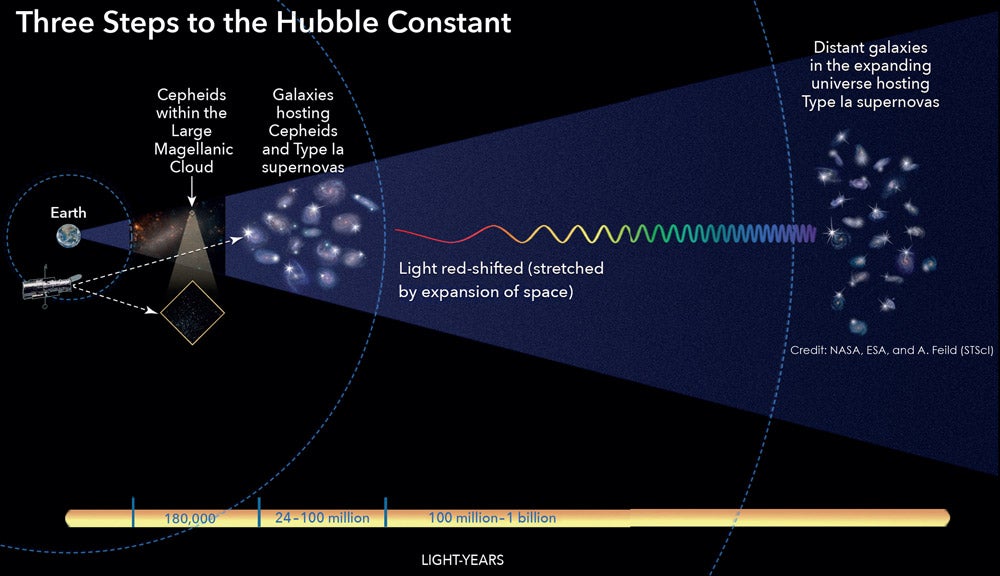

The expansion rate, called the Hubble constant, ought to come out the same no matter how you measure it. Yet it does not. Measurements tied to the early universe, using relic signals like the cosmic microwave background, land in the high 60s when expressed in kilometers per second per megaparsec.

Late-universe measurements, often anchored to exploding stars and the cosmic distance ladder, tend to land higher, in the low-to-mid 70s. The mismatch is now described as a conflict of more than 5 sigma, a level that keeps theorists awake at night. The problem has a name that has become almost too tidy for its stakes: the Hubble tension.

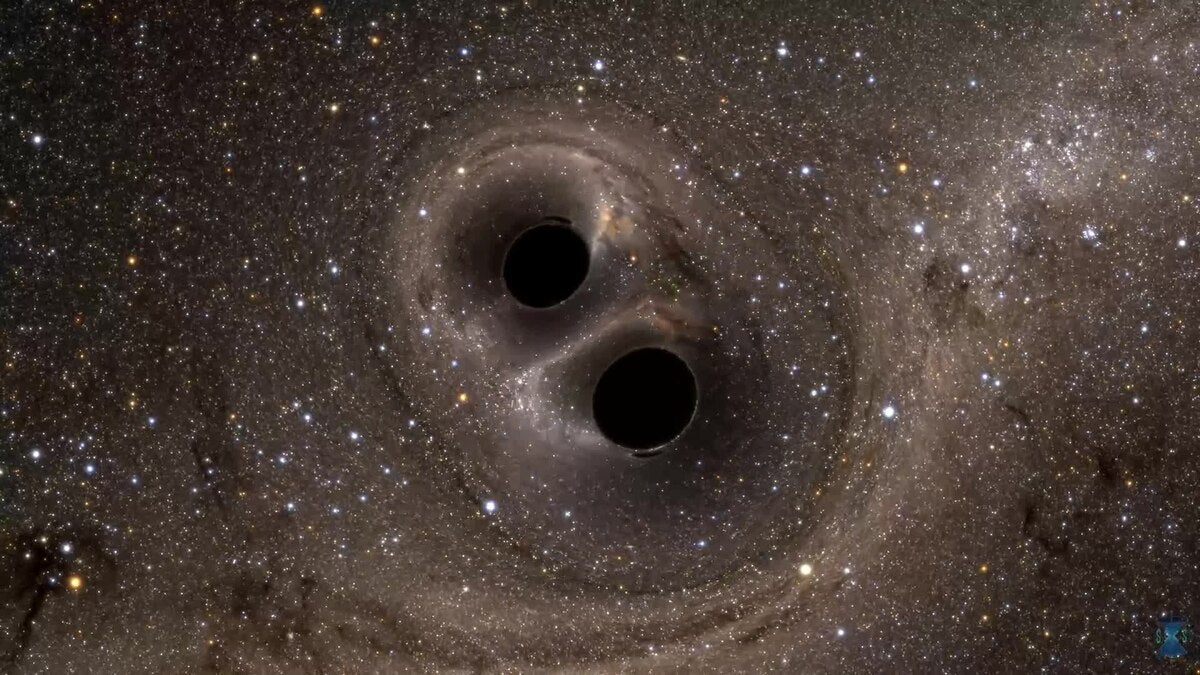

A research team based at The Grainger College of Engineering at the University of Illinois Urbana-Champaign and the University of Chicago thinks a faint, persistent “hum” of gravitational waves could help bring a new kind of clarity. They call their approach a “stochastic siren,” and they report what they describe as the first measurement of the Hubble constant that uses the stochastic gravitational-wave background from merging binary black holes.

“It’s important to obtain an independent measurement of the Hubble constant to resolve the current Hubble tension,” said Illinois Physics Professor Nicolás Yunes, one of the authors. “Our method is an innovative way to enhance the accuracy of Hubble constant inferences using gravitational waves.”

The paper, led by Illinois physics graduate student Bryce Cousins, has been accepted for publication in Physical Review Letters. The author list also includes Illinois graduate student Kristen Schumacher, Illinois postdoctoral researcher Ka-wai Adrian Chung, and University of Chicago postdoctoral researchers Colm Talbot and Thomas Callister. Co-author Daniel Holz, a professor of physics and of astronomy and astrophysics at the University of Chicago, framed the work as a shift in how cosmology can be done.

“It’s not every day that you come up with an entirely new tool for cosmology,” Holz said. “We show that by using the background gravitational-wave hum from merging black holes in distant galaxies, we can learn about the age and composition of the universe.”

The Hubble constant is meant to describe the present-day expansion rate of the universe. In practice, it has become a stress test for the standard picture of cosmology.

Late-universe probes, including type Ia supernovae and the cosmic distance ladder, gravitationally lensed systems, and cosmic chronometers, tend to favor higher values, about 72 to 74 km/s/Mpc. Early-universe probes, including the cosmic microwave background, baryon acoustic oscillations, and the inverse distance ladder, point to lower values, about 67 to 68 km/s/Mpc. The disagreement sits at more than 5 sigma, and that gap has pushed researchers to consider whether the early universe behaved differently than assumed.

Several proposed explanations orbit the tension: early dark energy, interactions between dark matter and neutrinos, and evolving dark-energy dynamics. Those are not minor tweaks. They imply changes to what the universe contains, or how it behaved, or both.

That is why independent measurements matter so much. If one method carries a hidden bias, a truly separate technique might expose it. Gravitational waves, in principle, offer that separation.

Gravitational waves are ripples in spacetime produced by violent events such as black hole mergers. A global network of detectors run by the LIGO-Virgo-KAGRA (LVK) Collaboration listens for these signals. When a merger is detected as a distinct event, the wave’s amplitude can provide a direct distance measurement without a traditional distance ladder.

But there is a catch. To turn that distance into a Hubble constant, you also need the event’s redshift, which is tied to how fast the source is receding due to cosmic expansion. Standard approaches get the redshift by finding light from the merger, or by identifying the host galaxy, or by statistical methods involving galaxy catalogs. Other approaches correlate galaxy distributions with merger events, or use neutron star physics such as tidal deformability.
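To see why both numbers are needed, here is a minimal sketch of the low-redshift Hubble law, c·z ≈ H0·d, which only holds for nearby sources. The function name and the example redshift and distance are hypothetical values chosen for illustration, not measurements from the study.

```python
# Low-redshift approximation: recession velocity c*z equals H0 times distance.
C_KM_S = 299_792.458  # speed of light in km/s


def hubble_constant_low_z(redshift: float, distance_mpc: float) -> float:
    """Estimate H0 in km/s/Mpc from a redshift and a distance in megaparsecs."""
    if distance_mpc <= 0:
        raise ValueError("distance must be positive")
    return C_KM_S * redshift / distance_mpc


# Hypothetical nearby source: redshift 0.01 at a distance of 43 Mpc.
h0_example = hubble_constant_low_z(0.01, 43.0)
print(f"H0 ~ {h0_example:.1f} km/s/Mpc")
```

A gravitational-wave signal supplies the distance in this relation; without the redshift, the equation has no velocity to divide.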

The new study leans into a different idea: not the clear, individually detected events, but the unresolved ones that pile up into a background.

“Because we are observing individual black hole collisions, we can determine the rates of those collisions happening across the universe,” Cousins said. “Based on those rates, we expect there to be a lot more events that we can’t observe, which is called the gravitational-wave background.”

LVK searches for this gravitational-wave background by cross-correlating data from pairs of detectors, under common assumptions that the background is stationary, Gaussian, unpolarized, and isotropic. So far, the specific astrophysical background expected from stellar-mass mergers has not been detected, even as pulsar timing arrays have reported a recent possible detection of a background likely produced by supermassive black hole binaries.
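The cross-correlation idea can be illustrated with a toy simulation, assuming two detectors that share a weak common signal but have independent noise. This is a sketch of the general principle, not the LVK pipeline; the function name, sample count, and signal and noise levels are all made up for illustration.

```python
import random


def cross_correlation_estimate(n_samples, signal_std, noise_std, seed=0):
    """Average the product of two detector streams sharing one common signal.

    Independent noise averages toward zero in the product, so the mean
    approaches the variance (power) of the common signal.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        common = rng.gauss(0.0, signal_std)              # shared background
        detector_1 = common + rng.gauss(0.0, noise_std)  # independent noise
        detector_2 = common + rng.gauss(0.0, noise_std)  # independent noise
        total += detector_1 * detector_2
    return total / n_samples


# Toy case: a signal more than three times weaker than the noise in each detector.
with_signal = cross_correlation_estimate(200_000, signal_std=0.3, noise_std=1.0)
no_signal = cross_correlation_estimate(200_000, signal_std=0.0, noise_std=1.0)
print(f"with common signal: {with_signal:.3f} (true power {0.3 ** 2:.3f})")
print(f"noise only:         {no_signal:.3f}")
```

When the correlated average stays consistent with zero, as in the noise-only case, the result is an upper limit on the background rather than a detection.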

That non-detection, the authors argue, is not a dead end. It is information.

Here is the central logic the team builds on. The strength of an astrophysical gravitational-wave background depends on how many mergers occurred over cosmic time and how they are spread across the available volume. That available volume depends on the universe’s expansion history.

In their formulation, a smaller Hubble constant corresponds to larger comoving volumes at a given redshift. For a fixed merger rate density, that means more mergers contribute to the background and the energy density in gravitational waves goes up. If the background is not detected, then values of the Hubble constant that would have produced an overly strong background become less plausible.
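The volume scaling can be checked with a short calculation, assuming a flat universe with matter and a cosmological constant. The matter fraction of 0.3 and the three trial values of the Hubble constant are illustrative assumptions, not numbers from the paper.

```python
import math

C_KM_S = 299_792.458  # speed of light in km/s


def comoving_distance_mpc(z, h0, omega_m=0.3, steps=1000):
    """Comoving distance in Mpc for flat LCDM, via the trapezoid rule."""
    def inv_e(zp):
        return 1.0 / math.sqrt(omega_m * (1.0 + zp) ** 3 + (1.0 - omega_m))

    dz = z / steps
    integral = 0.5 * (inv_e(0.0) + inv_e(z))
    for i in range(1, steps):
        integral += inv_e(i * dz)
    return (C_KM_S / h0) * integral * dz


def comoving_volume_gpc3(z, h0, omega_m=0.3):
    """Comoving volume (cubic gigaparsecs) enclosed within redshift z."""
    d_gpc = comoving_distance_mpc(z, h0, omega_m) / 1000.0
    return 4.0 / 3.0 * math.pi * d_gpc ** 3


# Volume out to redshift 1 for three trial expansion rates.
volumes = {h0: comoving_volume_gpc3(1.0, h0) for h0 in (60.0, 70.0, 80.0)}
for h0, volume in volumes.items():
    print(f"H0 = {h0:.0f} km/s/Mpc -> {volume:.0f} Gpc^3")
```

Because the distance carries a factor of 1/H0, the enclosed volume scales as 1/H0 cubed, so a modest change in the expansion rate changes the number of contributing mergers substantially.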

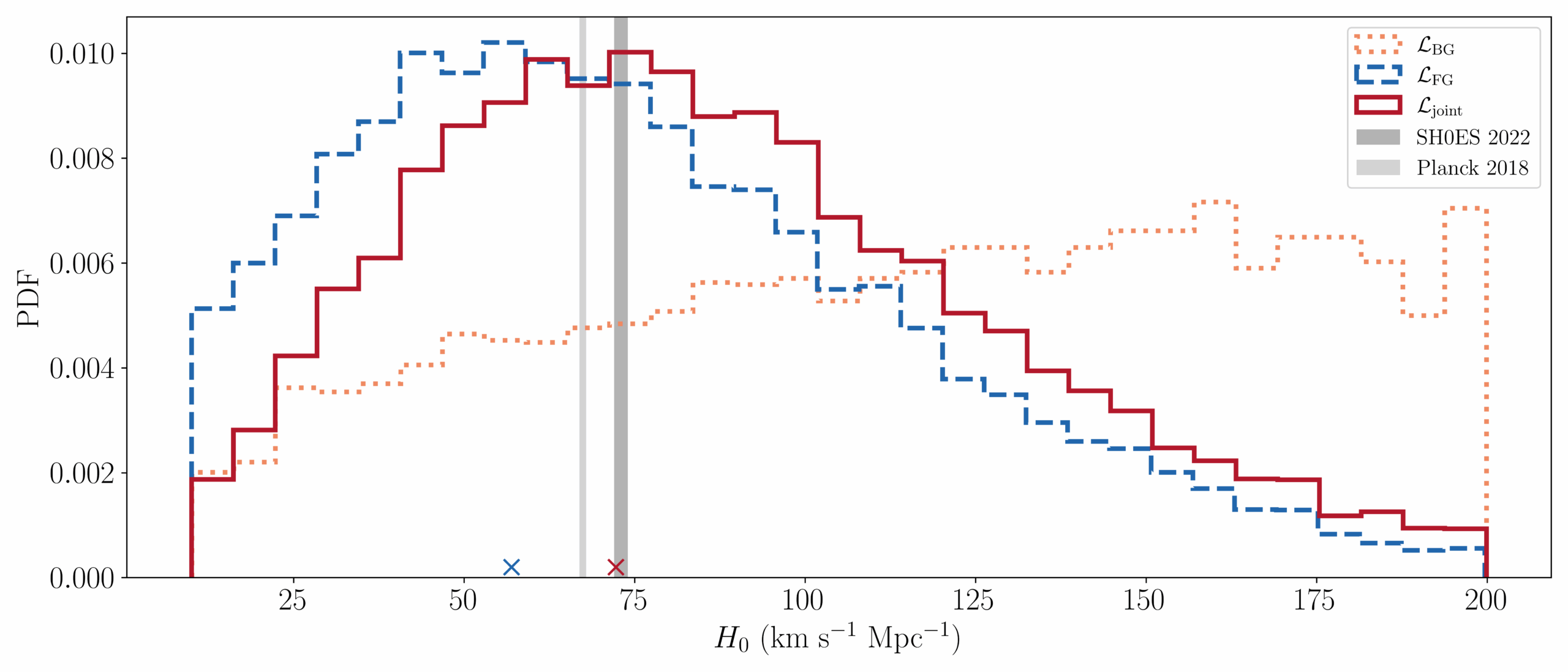

In other words, a continued non-detection pushes against the slow-expansion end of the Hubble constant range. The authors emphasize that this feature is unusual: the “stochastic siren” naturally raises the lower bound of the Hubble constant as limits on the background improve.

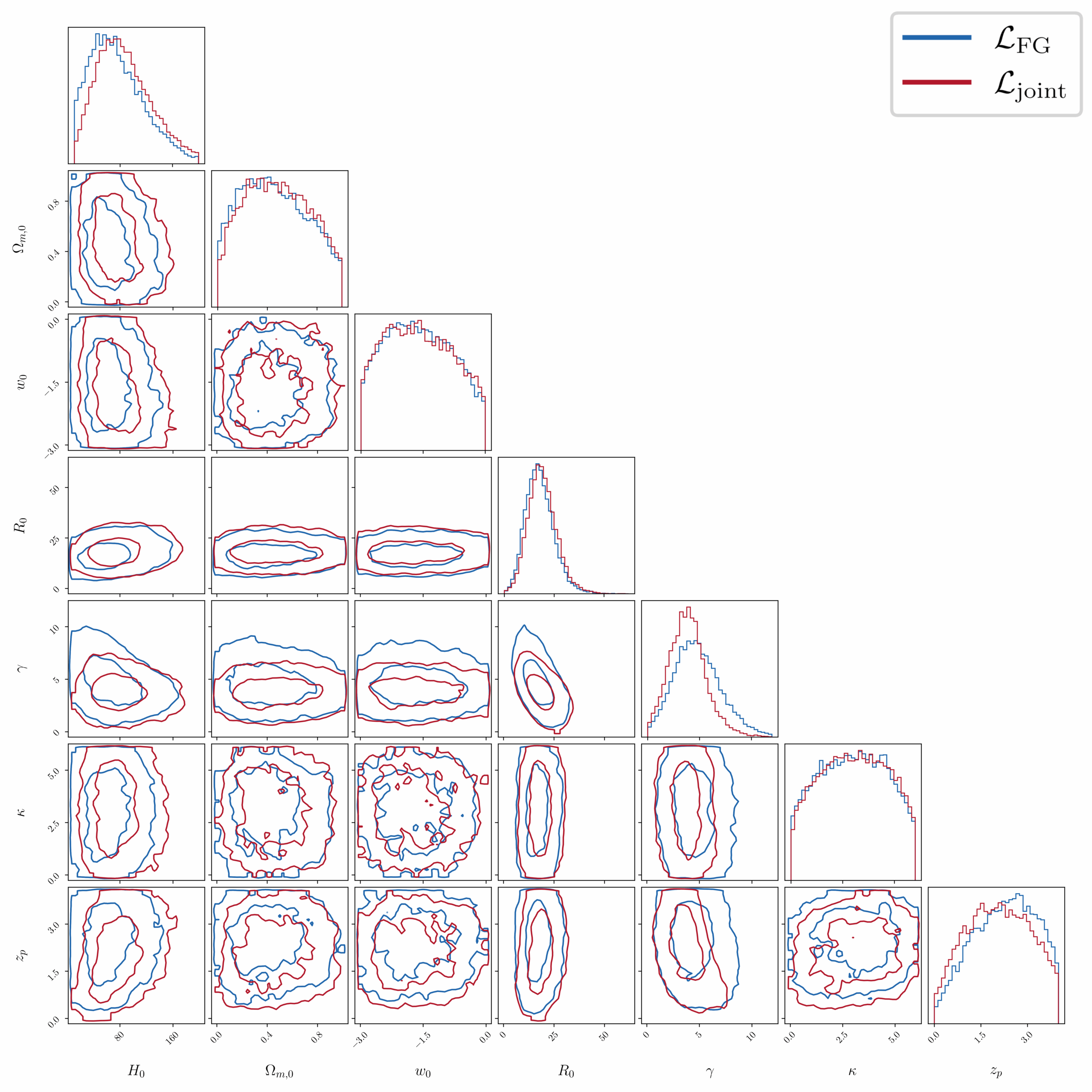

To test the idea, the team analyzed data from LVK’s first three observing runs. They combined two ingredients: the population inference from individually resolved binary black hole mergers, and the current non-detection of the background. They treated foreground and background data as independent, arguing the resolved mergers contribute only about 0.3 percent of the upper limits on the background for the dataset used, so the overlap is small enough to ignore.
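Treating the two data sets as independent means their likelihoods simply multiply. The toy sketch below illustrates that step on a grid of Hubble constant values. Everything in it is an assumption made for illustration: the Gaussian stand-in for the foreground likelihood, the inverse-cube scaling of the background strength, and every numerical value. It is not the authors' analysis and does not reproduce their results.

```python
import math


def gaussian(x, mean, sigma):
    """Unnormalized Gaussian, used for both toy likelihoods."""
    return math.exp(-0.5 * ((x - mean) / sigma) ** 2)


def foreground_likelihood(h0):
    # Toy stand-in for resolved-merger information: broad, centred on 60.
    return gaussian(h0, 60.0, 40.0)


def background_likelihood(h0, omega_ref=1.0, h_ref=70.0, sigma_omega=2.0):
    # Toy model: predicted background strength (arbitrary units) grows as H0
    # shrinks, following the volume scaling. The "measured" value is 0,
    # meaning a non-detection with uncertainty sigma_omega.
    predicted = omega_ref * (h_ref / h0) ** 3
    return gaussian(0.0, predicted, sigma_omega)


grid = [float(h) for h in range(20, 141)]  # trial H0 values in km/s/Mpc
fg = [foreground_likelihood(h) for h in grid]
joint = [f * background_likelihood(h) for f, h in zip(fg, grid)]


def peak(values):
    """Grid value where the (unnormalized) posterior is largest."""
    return grid[max(range(len(grid)), key=values.__getitem__)]


def fraction_below(values, limit):
    """Share of posterior weight at H0 values below `limit`."""
    return sum(v for v, h in zip(values, grid) if h < limit) / sum(values)


peak_fg, peak_joint = peak(fg), peak(joint)
low_fg, low_joint = fraction_below(fg, 40.0), fraction_below(joint, 40.0)
print(f"foreground-only peak: {peak_fg:.0f}, joint peak: {peak_joint:.0f}")
print(f"weight below 40: {low_fg:.3f} (foreground) vs {low_joint:.3f} (joint)")
```

In this toy setup the non-detection removes weight from low values of the Hubble constant and moves the peak upward, which is the qualitative behavior the authors describe.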

The combined approach shifted and tightened their inferred Hubble constant compared with using resolved mergers alone. The joint measurement peaked at 72 km/s/Mpc, with a 68.3 percent highest density interval extending 37 below the peak and 44 above it. By comparison, their foreground-only result peaked at 57 km/s/Mpc, with an interval extending 35 below and 43 above. They also cite a recent LVK “spectral siren” measurement using 42 binary black hole candidates from the GWTC-3 catalog, which reported 46 km/s/Mpc, with an interval extending 26 below and 49 above.

The big picture from these numbers is not that the uncertainty has vanished. It has not. The point is that folding the background constraint into the analysis changes what the gravitational-wave data prefer, and it does so using information that exists even before the background is detected.

The paper does not present the method as finished. It comes with choices and caveats.

For one, the authors model only binary black holes when computing the background contribution, even though the LVK background searches cover all merger types. They call their result conservative, noting that other merger classes can contribute comparably to the background and could strengthen the method, but only at the cost of more population modeling and more data.

They also neglect correlations between black hole mass and redshift. Some correlations could exist due to metallicity effects on progenitor stars and delays between formation and merger. The authors say recent work suggests such correlations are unlikely to be required for GWTC-3 binary black hole data, but they still flag it as a modeling choice.

Finally, they note that future detectors will likely observe a background that is not well described by the standard Gaussian, stationary assumptions. That would force changes in analysis. The assumption that foreground and background likelihoods can be treated as independent may also need revisiting once the catalog of individually detected mergers becomes much larger.

Still, the researchers see a practical near-term path. As observing runs continue, improved upper limits on the background should keep nudging the lower bound of the Hubble constant upward. The team also writes that the LVK detectors are expected to observe the gravitational-wave background in coming years, and that their projections suggest it could be detected with a signal-to-noise ratio of eight in less than a year using anticipated A# detector upgrades.

Research findings are available online in the journal Physical Review Letters.

The original story “Scientists develop a new way to measure the expansion rate of the universe” is published in The Brighter Side of News.
