A person with advanced heart failure can look stable at a routine clinic visit. Their vitals may not alarm anyone. Their ultrasound might appear unremarkable to a quick review. The most revealing information only surfaces during a demanding exercise test that pushes the heart close to its limits, and that test, at most hospitals, is never ordered.

That diagnostic gap kills people.

Advanced heart failure affects roughly 200,000 Americans and carries a one-year survival rate below 50 percent, worse than many cancers. Yet fewer than 6,000 patients per year receive advanced treatments such as heart transplants or mechanical pumps. Part of the problem is access to treatment. But the failure often begins earlier, in the clinic, when the severity of a patient’s condition simply goes unrecognized.

A research team from Weill Cornell Medicine, Cornell Tech, Columbia University Vagelos College of Physicians and Surgeons, and NewYork-Presbyterian is now testing whether artificial intelligence can close that gap using tools that are already sitting in every hospital.

The standard for assessing advanced heart failure severity is cardiopulmonary exercise testing, a procedure that measures how much oxygen the body can consume during intense physical exertion. That value, called peak VO₂, gives physicians a reliable window into how hard the heart is actually working under stress and helps determine who needs urgent intervention.

The problem is logistical. The test requires specialized equipment, trained personnel, and protocols that most community hospitals don’t maintain. It is available largely at major academic medical centers. Patients who never reach those centers, or who are never referred for the test, fall through the cracks without an accurate severity assessment.

“This opens up a promising pathway for more efficient assessment of patients with advanced heart failure using data sources that are already embedded in routine care,” said Dr. Fei Wang of Weill Cornell Medicine, the study’s senior author.

Their findings were published March 3 in npj Digital Medicine.

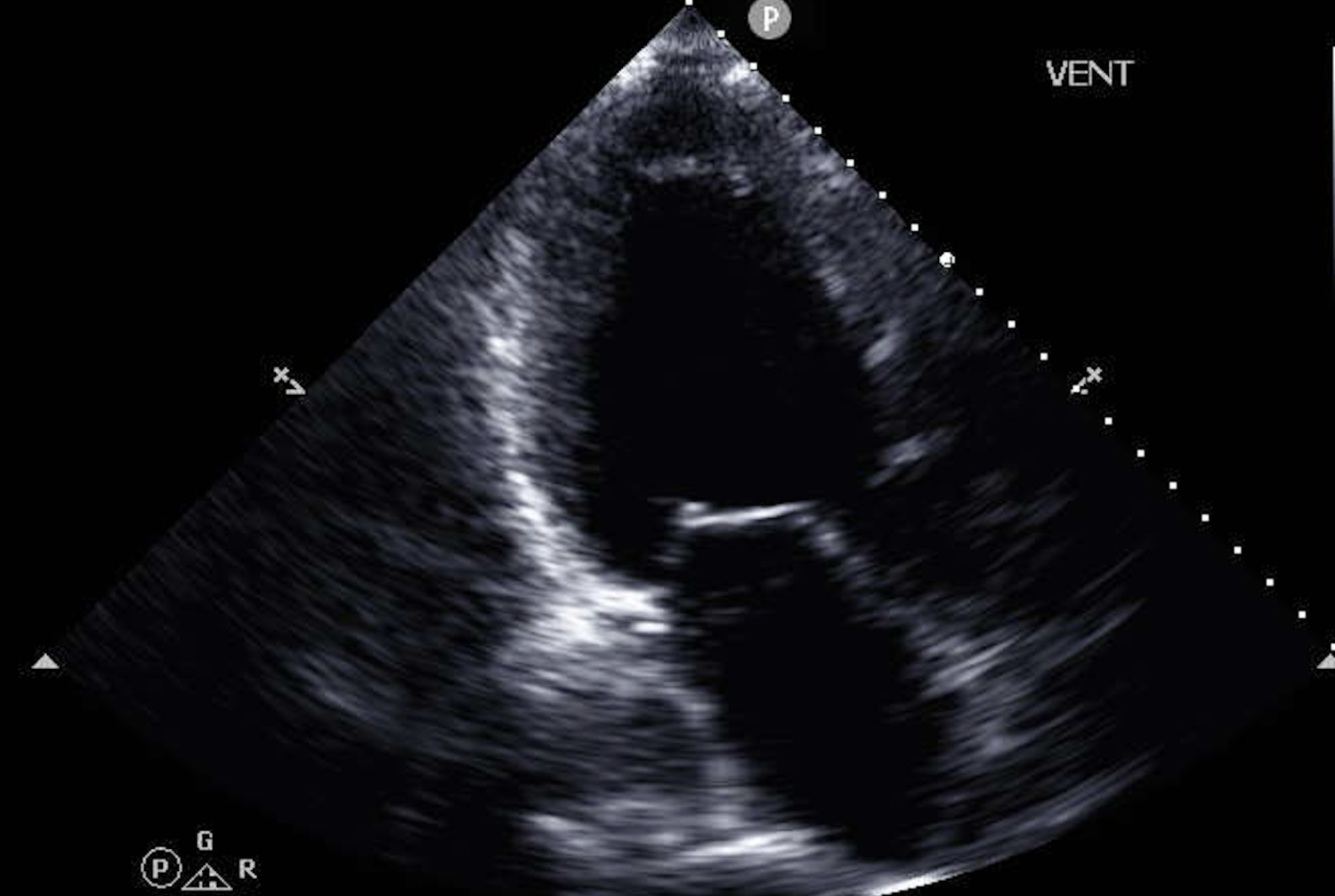

Heart ultrasounds, known as echocardiograms, are routine. Nearly every cardiology practice uses them. On their own, though, standard echocardiograms have not proven to be strong predictors of survival outcomes in heart failure, partly because the most important information is often buried across multiple image types rather than visible in any single measurement.

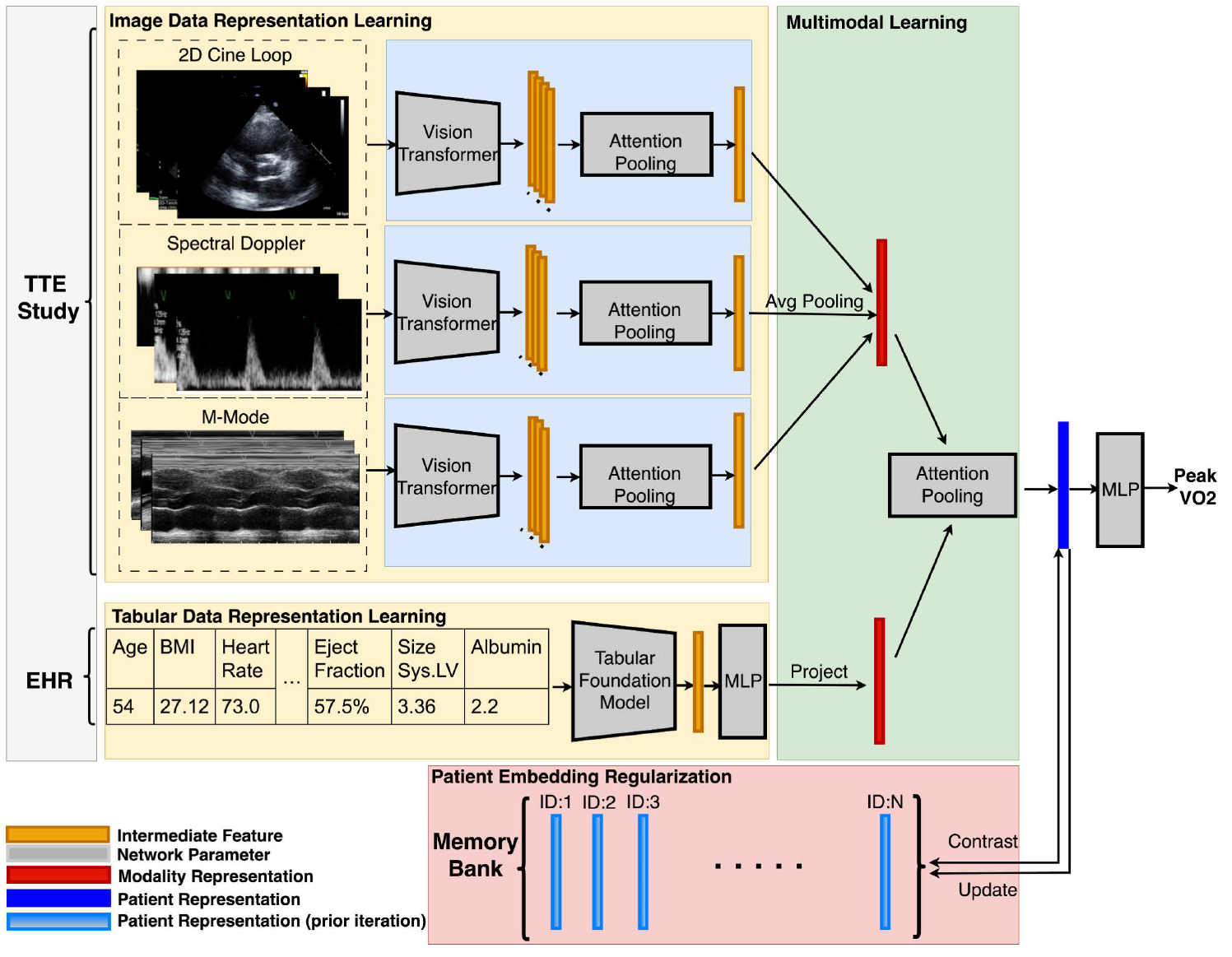

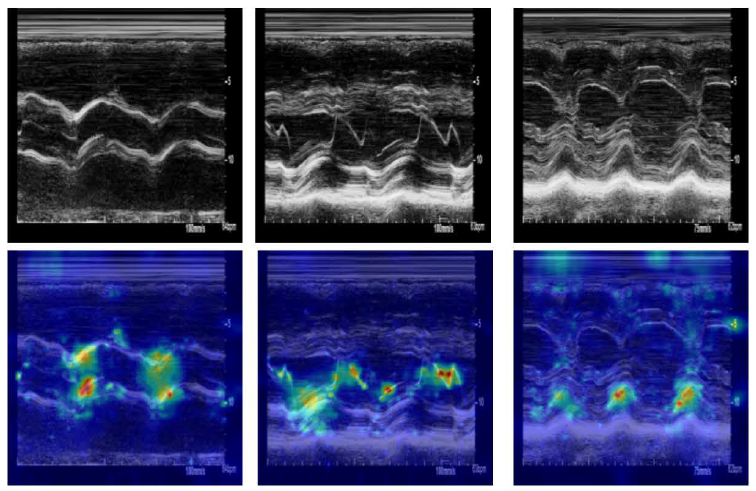

The team asked whether a machine learning model could do what human reviewers often can’t, which is to detect patterns across many simultaneous data streams and integrate them with clinical context. They built a system that analyzes several categories of ultrasound data together: moving video images of the heart’s chambers, valve motion patterns, and Doppler signals measuring blood flow. Those imaging inputs were combined with information pulled from electronic health records, including patient age, body mass index, and standard clinical measurements.

The model was trained on data from 1,000 patients treated at NewYork-Presbyterian/Columbia University Irving Medical Center, then tested on a separate group of 127 patients across three different hospitals, a critical design choice that forces the model to prove itself outside the environment where it was built.

Performance was strong. The system achieved roughly 85 percent accuracy in identifying high-risk patients, those with peak VO₂ below a clinically significant threshold. On the standard metric for evaluating diagnostic tools across decision thresholds (typically reported as the area under the ROC curve), the model scored 0.849 at the training hospital and 0.870 on the external validation group. That the external figure came out slightly higher is unusual and encouraging.
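For readers unfamiliar with the threshold-independent metric mentioned above, the following is a minimal sketch of how an area under the ROC curve (AUROC) and a single-cutoff accuracy are commonly computed. It is not the study's code. The function names, the 0.5 cutoff, and the toy labels and scores are all made up for illustration.

```python
def auroc(labels, scores):
    """Probability that a random high-risk case outscores a random
    lower-risk case; ties count as half. Equivalent to the area
    under the ROC curve."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def accuracy_at(labels, scores, cutoff):
    """Fraction of cases classified correctly at one fixed cutoff."""
    preds = [1 if s >= cutoff else 0 for s in scores]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


# Toy data (hypothetical): 1 = high risk, 0 = not high risk.
labels = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.5, 0.2, 0.1, 0.7]

print(auroc(labels, scores))             # 0.9375
print(accuracy_at(labels, scores, 0.5))  # 0.75
```

Accuracy depends on where the cutoff is placed, while AUROC summarizes ranking quality across all possible cutoffs, which is why studies often report both.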

“The close interaction between clinicians and AI researchers on this project ended up driving the development of new AI techniques that would not have been explored otherwise,” said Dr. Deborah Estrin of Cornell Tech.

The results come with real caveats that the authors describe directly.

Performance dropped in patients aged 60 and older. The researchers attribute this partly to smaller representation of older patients in the training data and partly to the greater clinical complexity of that group. Age-related changes in heart structure and function are harder to interpret, and the model appears to reflect that difficulty.

Accuracy also varied across racial groups and across imaging modalities. Spectral Doppler data, one of the ultrasound signal types included in the model, was particularly sensitive to differences between hospitals, suggesting that variation in how that data is collected or calibrated from site to site can affect predictions.
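Subgroup gaps like these are usually surfaced by scoring the same predictions separately for each group. The sketch below shows one simple way to do that. The record fields (`age_band`, `site`, `predicted_high_risk`, `actual_high_risk`) and the toy records are hypothetical and do not come from the study.

```python
from collections import defaultdict


def accuracy_by_group(records, group_key):
    """Return accuracy computed separately for each value of group_key."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for r in records:
        g = r[group_key]
        totals[g] += 1
        if r["predicted_high_risk"] == r["actual_high_risk"]:
            correct[g] += 1
    return {g: correct[g] / totals[g] for g in totals}


# Hypothetical toy records, for illustration only.
records = [
    {"age_band": "under_60", "site": "A", "predicted_high_risk": True,  "actual_high_risk": True},
    {"age_band": "under_60", "site": "B", "predicted_high_risk": False, "actual_high_risk": False},
    {"age_band": "60_plus",  "site": "A", "predicted_high_risk": True,  "actual_high_risk": False},
    {"age_band": "60_plus",  "site": "B", "predicted_high_risk": True,  "actual_high_risk": True},
    {"age_band": "under_60", "site": "A", "predicted_high_risk": False, "actual_high_risk": False},
    {"age_band": "60_plus",  "site": "B", "predicted_high_risk": False, "actual_high_risk": True},
]

by_age = accuracy_by_group(records, "age_band")
by_site = accuracy_by_group(records, "site")
print(by_age)
print(by_site)
```

With small subgroups, a handful of errors can swing these numbers sharply, so per-group results are best read alongside the group sizes.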

The study’s scope adds another limitation worth noting. All four hospitals involved are in the New York area, which may not reflect the imaging equipment, patient populations, or clinical practices at institutions elsewhere in the country or world. The external validation group, while drawn from hospitals separate from the training site, was relatively small at 127 patients.

Timing introduced a subtler problem. Ultrasound scans and exercise tests were not always performed at the same point in a patient’s care, meaning the model was sometimes predicting a value measured weeks or months before or after the imaging data it was analyzing.
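One common way to limit this kind of mismatch is to pair each scan with the nearest exercise test and discard pairs that are too far apart. The sketch below illustrates that idea only. The article does not say how the authors handled timing, and the function name, the patient IDs, the dates, and the 30-day window are all assumptions chosen for the example.

```python
from datetime import date


def closest_pairs(echo_by_patient, cpet_by_patient, max_gap_days):
    """For each patient, keep the echo/exercise-test pair with the
    smallest gap in days, but only if that gap is within max_gap_days."""
    pairs = {}
    for pid, echo_dates in echo_by_patient.items():
        cpet_dates = cpet_by_patient.get(pid, [])
        candidates = [
            (abs((e - c).days), e, c) for e in echo_dates for c in cpet_dates
        ]
        if not candidates:
            continue  # patient has no exercise test on record
        gap, echo_date, cpet_date = min(candidates)
        if gap <= max_gap_days:
            pairs[pid] = (echo_date, cpet_date, gap)
    return pairs


# Hypothetical toy data.
echo = {
    "p1": [date(2023, 1, 10), date(2023, 6, 1)],
    "p2": [date(2023, 3, 1)],
    "p3": [date(2023, 2, 1)],
}
cpet = {
    "p1": [date(2023, 1, 20)],
    "p2": [date(2023, 9, 1)],
}

matched = closest_pairs(echo, cpet, max_gap_days=30)
print(matched)  # only p1 is kept, with a 10-day gap
```

A tighter window gives cleaner labels but fewer usable patients, so the choice is a trade-off rather than a fixed rule.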

None of these limitations are unique to this study, and they don’t undermine the core finding. The model consistently outperformed earlier approaches to estimating peak VO₂ from non-exercise data, and it did so across hospitals it had never seen during training.

Dr. Nir Uriel of NewYork-Presbyterian, who led the clinical side of the research, described the potential directly. “If we can use this approach to identify many advanced heart failure patients who would not be identified otherwise, then this will change our clinical practice and significantly improve patient outcomes and quality of life,” he said.

The research team’s longer-term vision is integration: a model that runs quietly in the background of a hospital’s imaging system, generates a risk estimate whenever an echocardiogram is processed, and flags the result alongside the standard report. A clinician seeing an elevated prediction might then refer the patient for formal cardiopulmonary testing, accelerating a pathway to advanced care that currently takes months or never happens at all.

What this research ultimately points to is a reframing of where heart failure diagnosis can begin. The current system depends on specialized tests delivered at specialized centers to patients who are often already in crisis. This approach would move the starting point earlier, into routine scans already being collected at every hospital, read by a model trained to find severity signals that human reviewers are not equipped to spot on their own.

For patients in smaller hospitals, rural settings, or places without exercise testing infrastructure, that shift could mean the difference between receiving advanced treatment and never being identified as needing it.

The work still requires prospective clinical trials to validate performance in real-world deployment, but the foundation built here is solid enough to warrant that next step.

Research findings are available online in the journal npj Digital Medicine.

The original story “New AI model can detect advanced heart failure before it’s too late” is published in The Brighter Side of News.
