A robot dog that talks back may sound like a novelty. In this case, it is meant to solve a practical problem: guide dogs can lead, but they cannot explain.

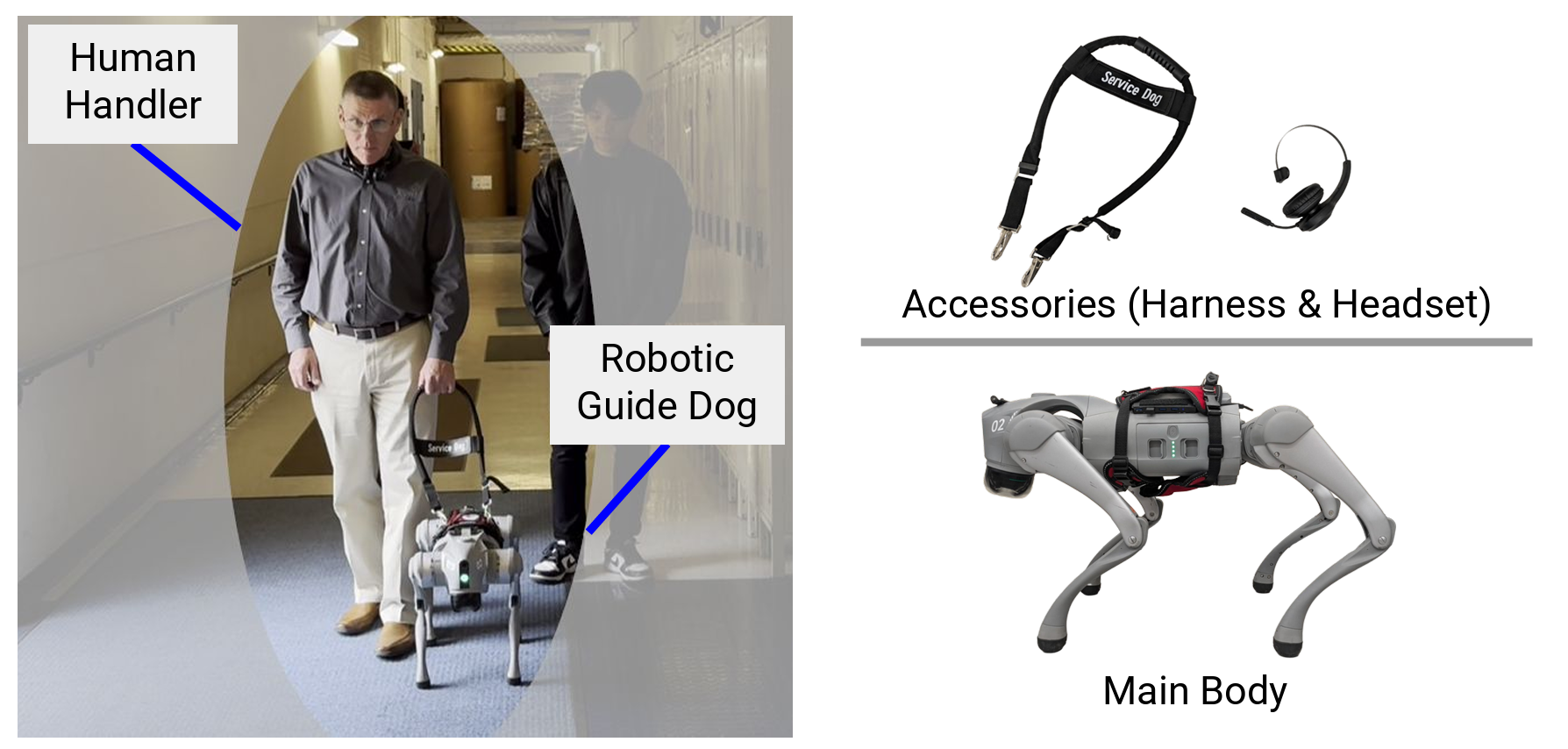

That gap is what researchers at Binghamton University, State University of New York, set out to address with a robotic guide dog system that uses a large language model to hold spoken conversations with visually impaired users. The machine can suggest routes, explain trade-offs before a trip begins, and describe what is happening during the walk itself.

“For this work, we’re demonstrating an aspect of the robotic guide dog that is more advanced than biological guide dogs,” said Shiqi Zhang, an associate professor at the Thomas J. Watson College of Engineering and Applied Science’s School of Computing. “Real dogs can understand around 20 commands at best. But for robotic guide dogs, you can just put GPT-4 with voice commands. Then it has very strong language capabilities.”

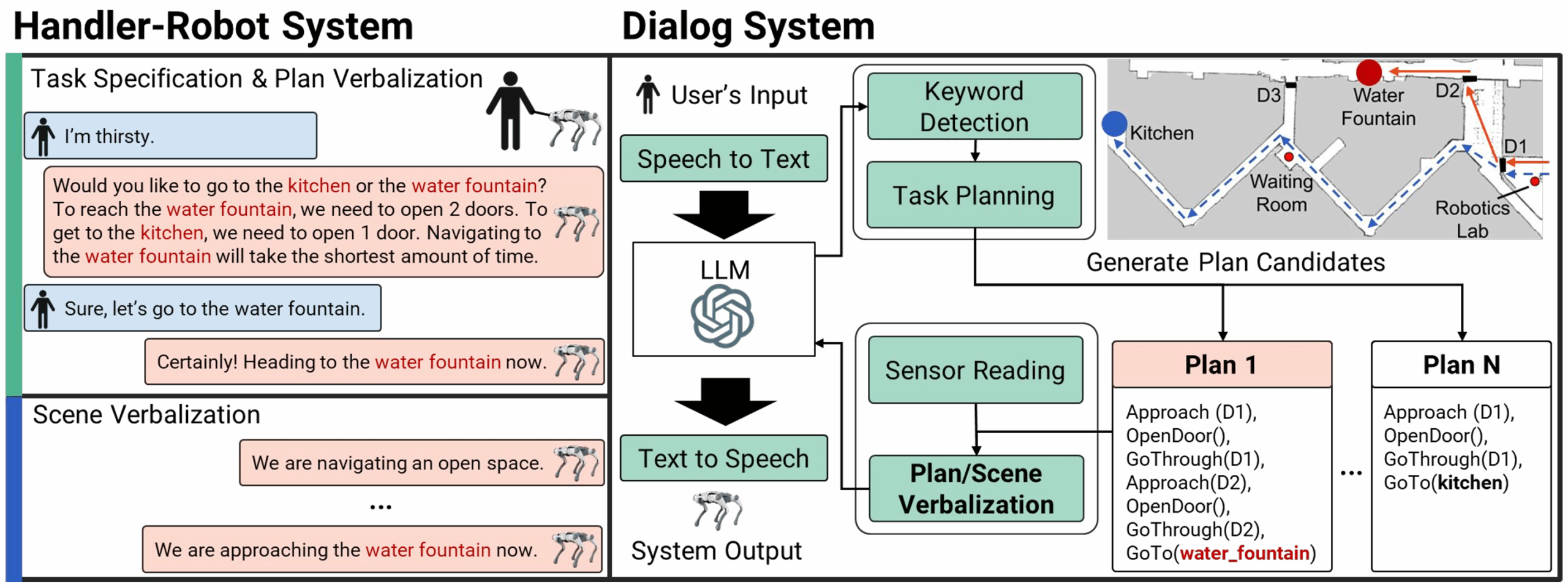

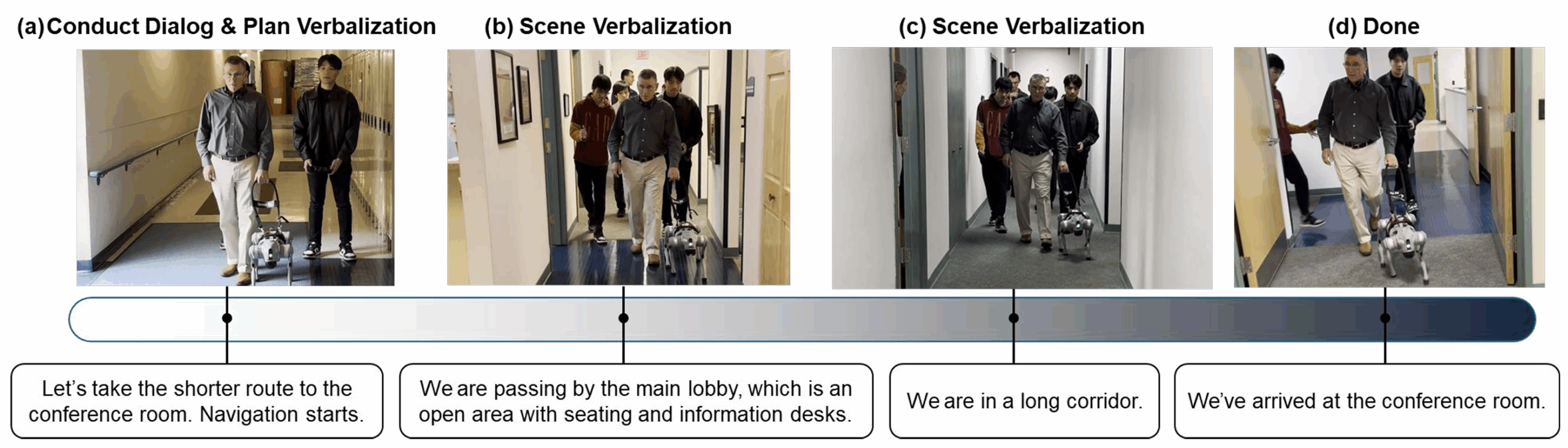

The project builds on earlier work from Zhang’s team, which trained robotic guide dogs to respond to leash tugs. This version adds spoken dialogue. A user can state a need, hear options, choose among routes, and receive updates along the way. The researchers call those two features “plan verbalization” before navigation and “scene verbalization” during it.

“This is very important for visually impaired or blind people, because situational and scene awareness is relatively limited without vision,” Zhang said.

Guide dogs have long been valued for helping visually impaired people move more safely and independently. The paper notes that they can improve confidence, companionship, and mobility. But they are also expensive and time-consuming to produce. Breeding and training can take years, and guide dog training centers have graduation rates below 50%, according to the paper.

Even then, access remains limited. The paper says only about 2% of visually impaired people in the United States use guide dogs. In China, it cites about 400 guide dogs for more than 10 million visually impaired people.

There are also practical barriers. Some people cannot walk the miles a day needed to care for a dog. Others have allergies. So the researchers argue that robotic guide dogs could fill a gap, especially if they can do more than just pull someone around obstacles.

That is part of the distinction here. Traditional guide dogs, and many robotic versions, help with movement. This system is trying to support decision-making too.

A common request might not be a direct destination. Someone might say, “I am thirsty.” The robot is designed to interpret that kind of open-ended statement, identify valid nearby options, and then explain them in plain language. One destination might be faster. Another might require opening doors. The user can decide.

The system combines a large language model with a task planner. The language model manages conversation and interprets spoken requests. The planner handles navigation logic, generating action sequences and calculating factors such as travel cost and the number of doors that need to be opened.

That planning layer matters because, as the paper notes, large language models often struggle with spatial reasoning. So instead of relying on the model alone, the researchers used a symbolic planner to compute a route and feed that information back into the robot’s spoken response.
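To make that division of labor concrete, here is a minimal sketch of the planner side. It is not the researchers' code: the paper describes a symbolic task planner, while this example swaps in a plain Dijkstra shortest-path search as a stand-in. The map, room names, distances, and the `plan_route` and `verbalize_plan` functions are all hypothetical.

```python
import heapq

# Hypothetical indoor map: room -> list of (neighbor, distance in meters, has_door).
OFFICE_MAP = {
    "lobby": [("hallway", 10.0, False), ("office", 6.0, True)],
    "hallway": [("kitchen", 15.0, False), ("vending machine", 4.0, False)],
    "office": [("kitchen", 5.0, True)],
    "kitchen": [],
    "vending machine": [],
}

def plan_route(graph, start, goal):
    """Dijkstra search on distance; also counts doors along the chosen path."""
    frontier = [(0.0, 0, start, [start])]  # (cost, doors, node, path)
    visited = set()
    while frontier:
        cost, doors, node, path = heapq.heappop(frontier)
        if node == goal:
            return cost, doors, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, dist, has_door in graph[node]:
            if neighbor not in visited:
                heapq.heappush(
                    frontier,
                    (cost + dist, doors + int(has_door), neighbor, path + [neighbor]),
                )
    return None  # no route found

def verbalize_plan(goal, plan):
    """Turn the planner's numbers into a sentence the robot could speak."""
    if plan is None:
        return f"I cannot find a route to the {goal}."
    cost, doors, _ = plan
    if doors == 0:
        door_text = "no doors"
    else:
        door_text = f"{doors} door{'s' if doors != 1 else ''}"
    return f"The {goal} is about {cost:.0f} meters away, with {door_text} to open."

# A request like "I am thirsty" might map to these two candidate destinations.
for destination in ("kitchen", "vending machine"):
    print(verbalize_plan(destination, plan_route(OFFICE_MAP, "lobby", destination)))
```

In this toy map the kitchen is closer but sits behind two doors, while the vending machine is slightly farther with none. That is the kind of trade-off the system is described as explaining before the user picks a route.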

Once a route is chosen, the robot begins guiding the user and continues offering updates when the environment changes or when there has been a long silence. A hallway, an office door, a kitchen, or a transition into a new area can all trigger spoken descriptions meant to improve spatial awareness.
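The two triggers described above, a change in surroundings and a long silence, can be sketched in a few lines. This is an illustration only, not the team's implementation: the `SceneVerbalizer` class, the 20-second silence limit, and the spoken phrases are assumptions made for the example.

```python
class SceneVerbalizer:
    """Decides when the robot should speak during a walk (hypothetical sketch)."""

    def __init__(self, silence_limit=20.0):
        self.silence_limit = silence_limit  # assumed threshold, in seconds
        self.last_area = None
        self.last_spoken = None

    def update(self, area, now):
        """Return a sentence to speak, or None if the robot should stay quiet."""
        changed = area != self.last_area
        quiet_too_long = (
            self.last_spoken is not None
            and now - self.last_spoken >= self.silence_limit
        )
        self.last_area = area
        if changed:
            self.last_spoken = now
            return f"We are entering the {area}."
        if quiet_too_long:
            self.last_spoken = now
            return f"We are still in the {area}."
        return None

# Simulated walk: (area label from the map, time in seconds).
verbalizer = SceneVerbalizer(silence_limit=20.0)
for area, t in [("hallway", 0), ("hallway", 5), ("hallway", 21), ("kitchen", 22)]:
    message = verbalizer.update(area, t)
    if message:
        print(f"{t:>3}s: {message}")
```

The robot speaks on entering the hallway, stays quiet at five seconds, speaks again once 20 seconds have passed without an update, and announces the kitchen as soon as the area label changes.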

The researchers say that is important because visually impaired handlers do not simply follow passively. They make navigation decisions as part of a team, while the guide dog helps with obstacle avoidance.

The current setup also depends on several important assumptions. The robot must already have a map of the environment, including labels for places such as kitchens and conference rooms. It also assumes stable locomotion control, path planning software, and prior knowledge of the indoor space. The paper does not address situations where a human and robot explore a new environment together, where the robot falls and needs help recovering, or where it disobeys commands.

To see how the system worked with real users, the team recruited seven legally blind individuals between the ages of 40 and 68. One participant later said they did not meet the vision requirement and was excluded from the final analysis. Two participants had prior guide dog experience.

The study took place in a large office environment with many rooms. Participants asked to go to a conference room, and the robot guided them under three conditions: minimal verbalization, scene verbalization only, and the full version combining scene and plan verbalization.

For safety, the robot’s movements were controlled by an expert operator in what researchers call a Wizard of Oz setup. In other words, the system was not acting with full autonomy during the participant study.

After each trial, participants completed a questionnaire on usefulness, helpfulness, safety, ease of communication, and whether they would prefer a real guide dog for the same task.

The combined system scored highest on interface utility, helpfulness compared with no tools, helpfulness compared with other tools, ease of communication, and preference relative to a real guide dog. On a 5-point scale, it received 4.83 for utility, 4.83 for helpfulness compared with no tools, 4.33 for helpfulness compared with other tools, 4.50 for ease of communication, and 3.67 on the question comparing it with a real guide dog.

Its safety score was slightly lower than the other conditions, at 3.83. The researchers said comments from participants suggested that unfamiliarity with walking alongside a robot may have shaped that rating.

“They were super excited about the technology, about the robots,” Zhang said. “They asked many questions and they really see the potential for the technology and hope to see this working.”

The team also ran simulation studies to test how well the dialogue system handled ambiguous requests, noisy speech input, and route choices.

In those simulations, the full multi-turn system reached 94.8% accuracy when resolving ambiguous service requests. When noise was added to mimic speech recognition errors, accuracy dropped by 5.2%, while a keyword-based system performed far worse. In another test, giving users plan information increased dialogue length but reduced navigation cost and lowered total task time.

Still, the paper is careful not to oversell what has been built. The human study was small. The robot was not fully autonomous in those trials. The work focused on indoor navigation in a mapped environment. And even with strong user interest, the safety ratings show that trust and comfort remain open questions.

The researchers say future work will involve more user studies, greater autonomy, and longer routes indoors and outdoors.

This work points toward a navigation aid that could offer something biological guide dogs and simpler assistive tools cannot: a running explanation.

For visually impaired users, that could mean more control over route choices, more awareness of surroundings, and another option in cases where a traditional guide dog is too costly or impractical to maintain.

The system is still early, but it suggests that assistive robots may become less like passive machines and more like travel partners that can explain where they are going, and why.

Research findings are available online on the preprint server arXiv.

The original story “Talking robot guide dog uses AI to describe the world as it leads” is published in The Brighter Side of News.
