A page filled with abstract shapes can spark wildly different ideas depending on who is looking at it. For one person, a curve becomes a bird in flight. For another, it turns into something mechanical. For a generative AI system, that same shape may lead nowhere at all.

That contrast sits at the center of a new study examining whether artificial intelligence can truly be creative, or whether it only appears that way.

The research, led by scientists at the University of Barcelona and published in the journal Advanced Science, didn’t test whether AI could generate a convincing painting or mimic a famous style. It asked something harder: can AI produce genuinely original visual ideas on its own, without being told what to think?

The short answer is no. Not yet. Not even close.

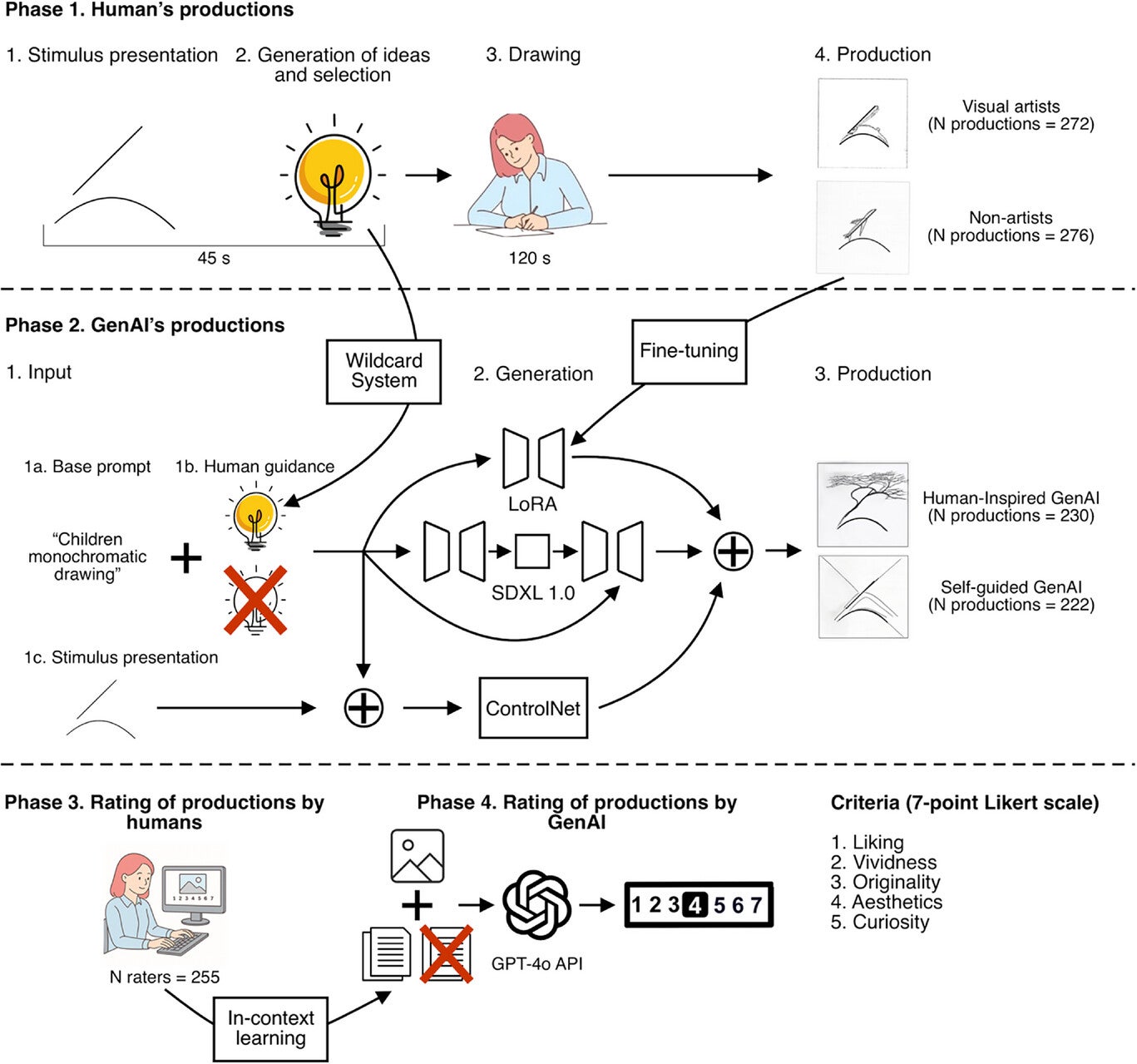

The team used a standardized tool called the Test of Creative Imagery Abilities, which presents participants with abstract shapes and asks them to mentally generate an image, describe it, and then draw it. It’s a task designed to measure imagination from the inside out, not just the quality of the finished product.

Two groups of humans took part: 27 visual artists and 26 non-artists. A Stable Diffusion image-generation model completed the same task under two conditions. In one, researchers gave it a prompt that included a real idea generated by one of the human participants during the task. In the other, the model received only a basic prompt with no human idea attached. The researchers called the first condition "human-guided" and the second "self-guided."

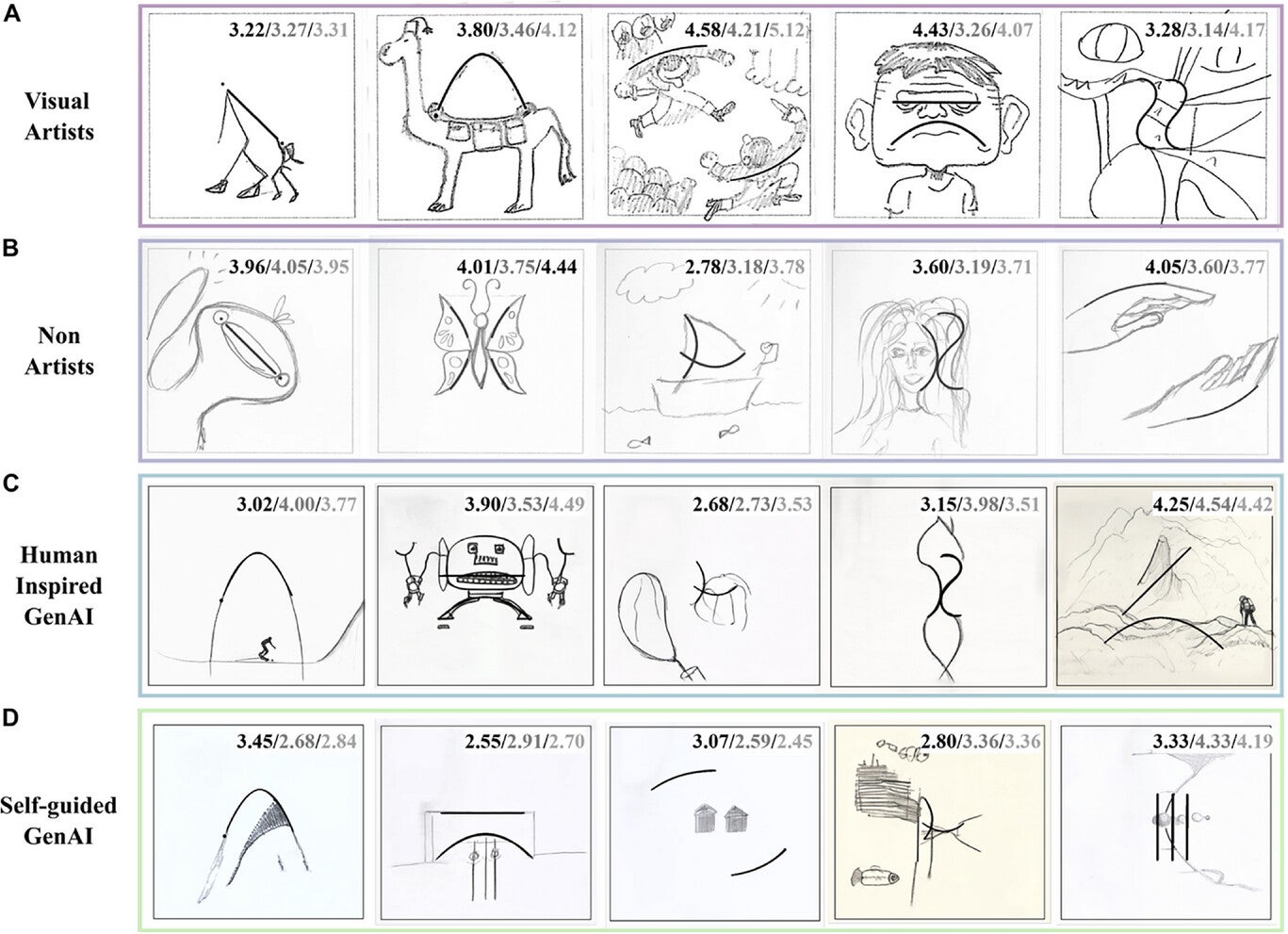

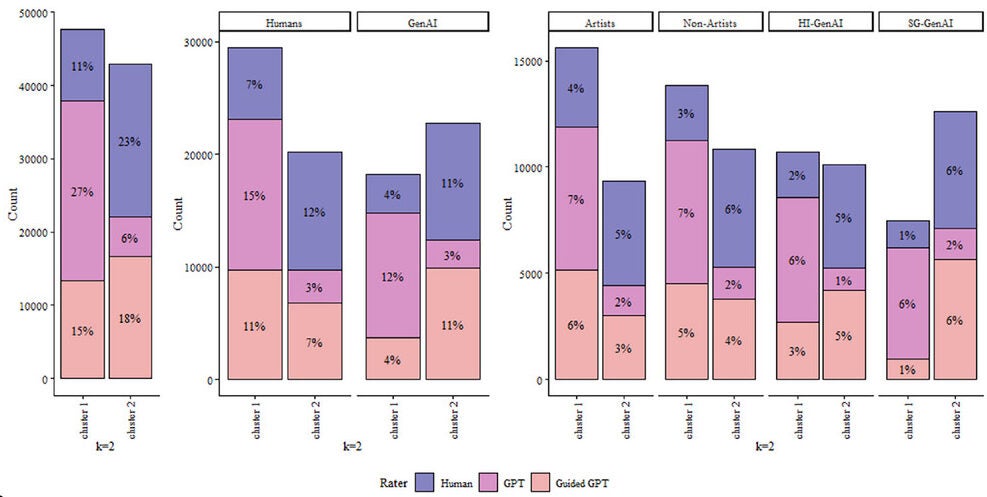

A panel of 255 human raters then evaluated all 1,000 images produced in the study across five dimensions: liking, vividness, originality, aesthetics, and curiosity. The results were consistent across every measure. Visual artists ranked highest. Non-artists came next. The human-guided AI followed, scoring roughly on par with non-expert humans. The self-guided AI came last, and not by a narrow margin.

“Although the AI model was trained with the creative productions of human participants, it showed a poor performance in the production of creative images,” said Xim Cerdá-Company, a researcher at IDIBELL and the CVC-UAB and co-leader of the study. “In fact, it did even worse when it was deprived of human assistance.”

This matters because most prior research on AI creativity has leaned heavily on verbal tasks, asking language models to generate unusual uses for objects or complete open-ended prompts. Those tests tend to reward speed, volume, and the ability to combine distant concepts, areas where AI systems have a genuine computational advantage. The conclusion from that literature has been, broadly, that AI is already a creative force.

The Barcelona team’s visual task is structured differently. Abstract shapes carry no semantic content. They don’t suggest obvious interpretations. A human looking at a wavy line might connect it to something from memory: a coastline, a feeling, a half-remembered dream. The AI, given the same shape and a minimal prompt, had almost nothing to work with.

Professor Antoni Rodríguez-Fornells, co-leader of the study and head of the Cognition and Brain Plasticity research group at the University of Barcelona, put it directly: “Current generative AI models are still far from replicating independent creative processes.”

What the self-guided AI produced reflected that gap. Without a human idea in the prompt, the model’s outputs were rated as the least creative of any group. Adding a single concrete idea from a human participant pulled the model’s performance up to the level of an ordinary person with no artistic training. That’s a significant jump, and it reveals something important: the improvement wasn’t coming from the model. It was coming from the person who wrote the prompt.

The study also tested how well AI judges creativity. GPT-4o evaluated the same images under two conditions: one that simply mirrored the human rating task, and one that provided reference examples of human ratings alongside the images.

Without those reference points, GPT-4o struggled to distinguish between categories. It rated AI-generated images at roughly the same level as human images, a pattern that diverged sharply from human judgments. Human raters were discriminating and consistent; GPT-4o was generous and scattered. When reference examples were added, the AI rater’s scores shifted closer to human patterns, but still with notably wider variance.

The dimensions where human and AI raters agreed most were the more perceptually direct ones: vividness, liking, aesthetics. The weakest agreement emerged around originality and curiosity, which are harder to pin down and more dependent on context, expectation, and cultural knowledge.

“Creativity must be studied as a process, not just focused on its results,” Cerdá-Company said.

The researchers are candid about limitations. The study used a single image-generation model, Stable Diffusion, rather than the newer multimodal systems that currently attract the most public attention. Those models couldn’t be tested under the same controlled conditions.

The findings carry real weight for anyone building or deploying AI tools in creative contexts. The common assumption that AI can serve as an autonomous creative partner may be premature. What the study actually demonstrates is that AI performs well when given structured human input and poorly when that input is removed.

For designers, artists, marketers, or educators using these tools, that distinction matters. The quality of what AI produces is not a fixed property of the model. It scales directly with the quality and specificity of human involvement. The model isn’t an engine of ideas. It’s closer to a sophisticated executor of them.

That may still be useful. But it’s a different thing entirely.

Research findings are available online in the journal Advanced Science.

The original story “AI struggles with true creativity compared to humans, study finds” is published in The Brighter Side of News.
