Some scientific breakthroughs arrive with a bang. Others arrive twice.

That odd pattern sits at the center of a new study from researchers at Binghamton University and the University of Virginia, who built a way to track when research truly changes the direction of science. Their method does more than flag famous papers. It also picks up something that often confuses older citation-based measures: major discoveries made at nearly the same time by different people.

The study, published in Science Advances, comes from Sadamori Kojaku of Binghamton University, Munjung Kim, and Yong-Yeol Ahn. Together, they set out to measure what scholars often call “disruptiveness,” the degree to which a paper pulls a field away from its earlier path and pushes it somewhere new.

“Science doesn’t evolve incrementally, but sometimes we see abrupt changes. Scholars are interested in when and why exactly the disruption happens,” Kojaku said. “And to do that, we need to create a metric to kind of tell scholars, ‘OK, this is the disruption happening in a given year.’”

Their answer was a machine-learning system that mapped more than 55 million scientific papers and patents.

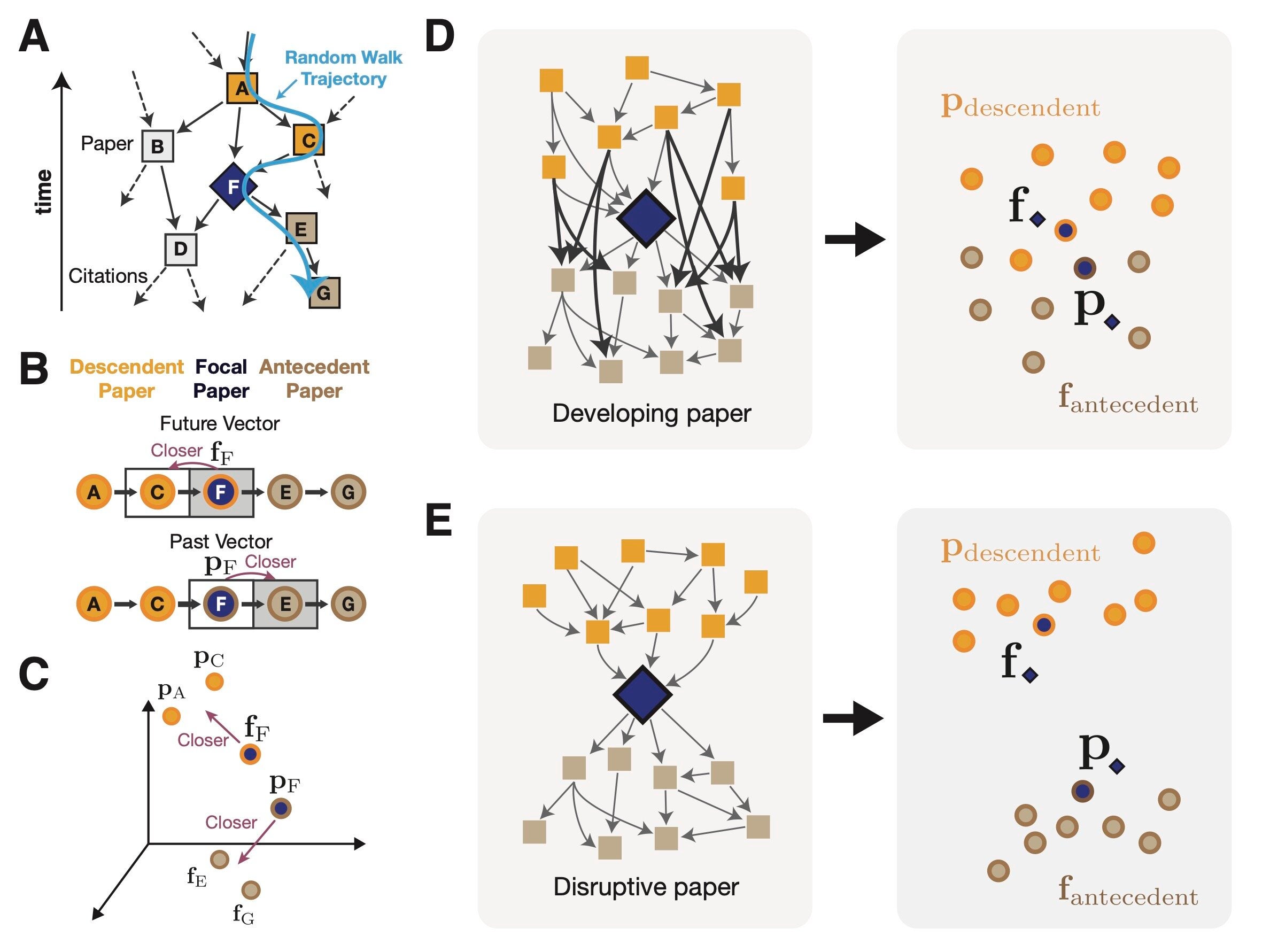

The idea behind the model is simple in concept, even if the math under it is not. Each paper gets two positions in a large research map. One reflects the work that came before it. The other reflects the work that followed it.

If those two positions sit close together, the paper mostly extends an existing line of research. If they land far apart, the paper may have redirected the field.

That distance became the team’s new metric, called the Embedding Disruptiveness Measure, or EDM.
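
As a rough illustration of that idea, the sketch below scores a paper by the distance between its two positions. The article says only "distance"; using cosine distance here is an assumption, and the function names and toy two-dimensional vectors are hypothetical rather than taken from the study.

```python
import numpy as np

def cosine_distance(a, b):
    """Return 1 minus the cosine similarity of two vectors."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return 1.0 - float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def embedding_disruptiveness(past_vec, future_vec):
    """Toy disruptiveness score: distance between a paper's 'past'
    position and its 'future' position (cosine distance is assumed)."""
    return cosine_distance(past_vec, future_vec)

# Hypothetical vectors: one paper whose later use resembles its sources,
# and one whose later use points in a very different direction.
incremental = embedding_disruptiveness([1.0, 0.2], [0.9, 0.3])
redirecting = embedding_disruptiveness([1.0, 0.2], [-0.2, 1.0])
print(incremental, redirecting)
```

In a real pipeline the vectors would come from a trained embedding of the citation network, not from hand-picked numbers.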

The researchers argue that this broader map captures more than the citation links closest to a paper. That matters because older disruption measures can be overly local. A single citation can sometimes distort the score, especially when two papers report the same important idea around the same time.

The new measure also produced a smoother spread of scores across the scientific record. By contrast, the commonly used disruption index often clumped near zero, which the authors describe as a kind of degeneracy that limits its resolution.

To test the method, the team compared it against sets of landmark papers. They looked at 302 Nobel Prize-winning papers in the Web of Science dataset and 278 milestone papers selected by the American Physical Society.

Both measures often rated these papers as disruptive, but the older index showed an awkward split. Some landmark papers scored as highly disruptive, while others looked barely disruptive at all.

That turned out not to be random.

Many of the low-scoring cases involved simultaneous discoveries, where separate papers reached the same breakthrough independently, or where one discovery was spread across multiple papers. In those cases, mutual citations between related papers could drag the older disruption score sharply downward.
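
A toy calculation can show why mutual citations hurt. The sketch assumes the older measure is the standard citation-based disruption index, D = (n_i - n_j) / (n_i + n_j + n_k), where n_i counts later papers citing only the focal paper, n_j counts those citing both the focal paper and its references, and n_k counts those citing only its references. The article does not spell out this formula, and the paper ids below are made up.

```python
def disruption_index(focal, refs_of):
    """refs_of maps each paper id to the set of paper ids it cites.
    Simplification: every paper that is not the focal paper or one of
    its references is treated as a later paper."""
    focal_refs = refs_of[focal]
    n_i = n_j = n_k = 0
    for paper, refs in refs_of.items():
        if paper == focal or paper in focal_refs:
            continue
        cites_focal = focal in refs
        cites_refs = bool(refs & focal_refs)
        if cites_focal and cites_refs:
            n_j += 1   # builds on the focal paper and on its sources
        elif cites_focal:
            n_i += 1   # builds on the focal paper alone
        elif cites_refs:
            n_k += 1   # bypasses the focal paper
    total = n_i + n_j + n_k
    return (n_i - n_j) / total if total else 0.0

# Four later papers cite both twin papers A and B.
later = {f"L{k}": {"A", "B"} for k in range(1, 5)}

# Case 1: the twins do not cite each other.
no_mutual = {"old": set(), "A": {"old"}, "B": set(), **later}
# Case 2: the twins cite each other, so B becomes one of A's references.
mutual = {"old": set(), "A": {"old", "B"}, "B": {"A"}, **later}

d_no_mutual = disruption_index("A", no_mutual)
d_mutual = disruption_index("A", mutual)
print(d_no_mutual, d_mutual)
```

In this toy graph the same four later papers flip from counting for paper A to counting against it once A lists its twin as a reference, which is the local sensitivity the researchers describe.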

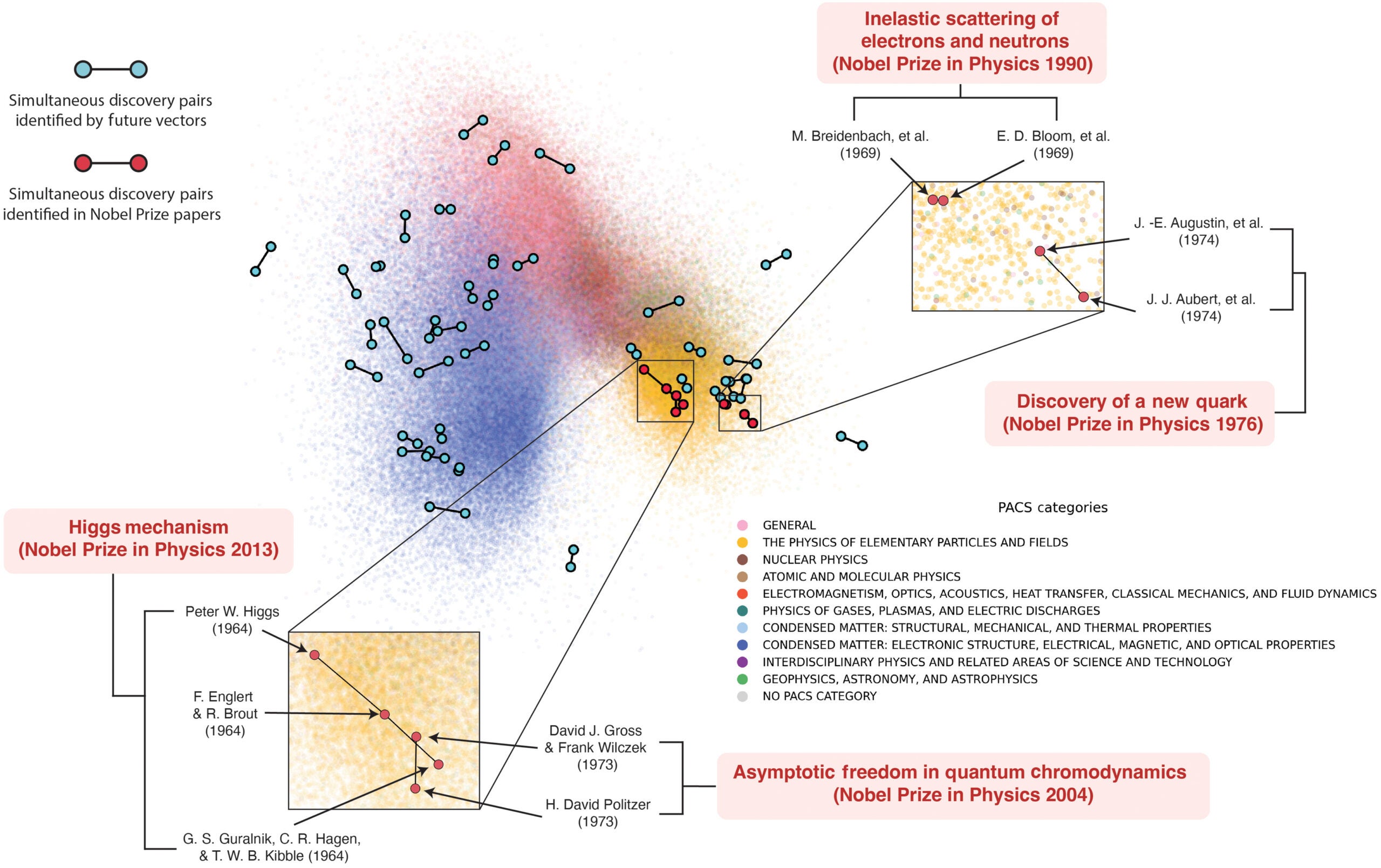

The researchers point to well-known examples. One was the 1974 discovery of the J/ψ meson, announced by teams led by B. Richter and S. Ting, whose two papers appeared in the same issue of Physical Review Letters and cited each other. Another was the Higgs mechanism, developed independently by F. Englert and R. Brout, and by P. Higgs.

That distinction showed up in the statistics, too. In logistic regression tests, EDM had a stronger association with milestone papers and Nobel-winning papers than the older disruption index did. A 10% increase in EDM percentile multiplied the odds of a paper being a milestone paper by 1.23 and the odds of it being a Nobel Prize-winning paper by 1.34, according to the study.
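
Treating the 1.23 and 1.34 figures as odds ratios, the usual output of a logistic regression, the short sketch below shows what such a ratio does to a probability. The 1% baseline probability is a made-up value for illustration, and compounding the ratio over several steps assumes the effect is constant across the percentile range.

```python
def apply_odds_ratio(probability, odds_ratio):
    """Convert a probability to odds, scale the odds, convert back."""
    odds = probability / (1.0 - probability)
    new_odds = odds * odds_ratio
    return new_odds / (1.0 + new_odds)

baseline = 0.01  # hypothetical chance that a paper is a milestone paper

# One 10% step up in EDM percentile, using the milestone odds ratio.
one_step = apply_odds_ratio(baseline, 1.23)

# Five such steps, assuming the same ratio applies at every step.
five_steps = apply_odds_ratio(baseline, 1.23 ** 5)

print(one_step, five_steps)
```

For rare outcomes like these, an odds ratio is close to a ratio of probabilities, so a factor of 1.23 reads roughly as a 23% relative increase.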

The same system also helped the team search for simultaneous discoveries directly.

Their reasoning was that if two papers describe the same breakthrough, later researchers may use them in similar ways. That would place their “future” vectors close together in the embedding space, even if one paper was cited more often than the other.

Using that logic, the team examined pairs of papers in the American Physical Society dataset that were published in the same year and had very similar future vectors. They identified 18,417 possible pairs. Among 80 high-citation papers they manually checked, 64 were judged to be simultaneous discoveries.

Of those, 34 were independent simultaneous discoveries, while 30 involved the same authors splitting related findings across multiple publications.
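
The pair search described above can be sketched in a few lines. This is a minimal illustration, not the study's procedure: the cosine-similarity measure, the 0.95 threshold, and the sample records are all assumptions.

```python
from itertools import combinations

import numpy as np

def candidate_pairs(papers, threshold=0.95):
    """papers: list of (paper_id, year, future_vector) tuples.
    Returns same-year pairs whose future vectors are nearly parallel,
    most similar first."""
    pairs = []
    for (id_a, year_a, vec_a), (id_b, year_b, vec_b) in combinations(papers, 2):
        if year_a != year_b:
            continue  # only compare papers published in the same year
        a = np.asarray(vec_a, dtype=float)
        b = np.asarray(vec_b, dtype=float)
        similarity = float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))
        if similarity >= threshold:
            pairs.append((id_a, id_b, similarity))
    return sorted(pairs, key=lambda pair: -pair[2])

# Hypothetical records: p1 and p2 are used alike by later work,
# p3 is used differently, and p4 matches p1 but came a year later.
papers = [
    ("p1", 1974, [1.0, 0.1]),
    ("p2", 1974, [0.98, 0.12]),
    ("p3", 1974, [0.0, 1.0]),
    ("p4", 1975, [1.0, 0.1]),
]
found = candidate_pairs(papers)
print(found)
```

Comparing every pair scales quadratically, so a dataset the size of the American Physical Society corpus would likely need an approximate nearest-neighbor index. The manual check reported above, 64 confirmed out of 80, corresponds to 80% of the sampled cases holding up.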

That does not mean the method catches every case. The authors note that citation-based tools cannot reliably find overlooked discoveries if they received little recognition in the first place.

They also warn that disruptiveness is not a single, neat category. Some papers may look disruptive under one definition and developmental under another. Citation patterns can also reflect status, field norms, and other social forces, not just intellectual change.

Their method has further limits. It is computationally demanding to track how a paper’s disruptiveness changes over time, less effective for papers with few citations or references, and not always easy to interpret compared with simpler network measures. It may also miss parallel discoveries that arose in separate scholarly communities with little contact.

Still, the study suggests that science can be mapped in a way that sees more than famous names and citation counts. It can also see the strange moments when a field turns because several people, working apart, reached the same idea at nearly the same time.

Research findings are available online in the journal Science Advances.

The original story “Scientists find a new way to detect scientific breakthroughs” is published in The Brighter Side of News.
