Ants do not need a foreman to raise a city.

Working with little more than local cues, they excavate tunnels, pile up soil, and shape nests that can regulate airflow and temperature. That kind of collective competence has long fascinated scientists, partly because no single ant appears to understand the whole project. The intelligence seems to sit somewhere between the insects and the place they are changing.

Now a team at Harvard has built a robotic version of that idea.

Researchers from the Harvard John A. Paulson School of Engineering and Applied Sciences and the Faculty of Arts and Sciences developed small cooperative robots that can organize themselves to either build structures or dismantle them, using only simple rules and changes in their surroundings. The work, published in PRX Life, was led by L. Mahadevan, whose lab has spent years studying how physical processes shape living systems, from insect colonies to folds in the brain and gut.

“Our new study shows how simple, local rules can lead to the emergence of complex task completion that is self-organized and thus robust and adaptive,” Mahadevan said. “We also introduce the concept of ‘exbodied intelligence,’ where collective cognition arises not solely from individual agents, but from their ongoing interaction with an evolving environment.”

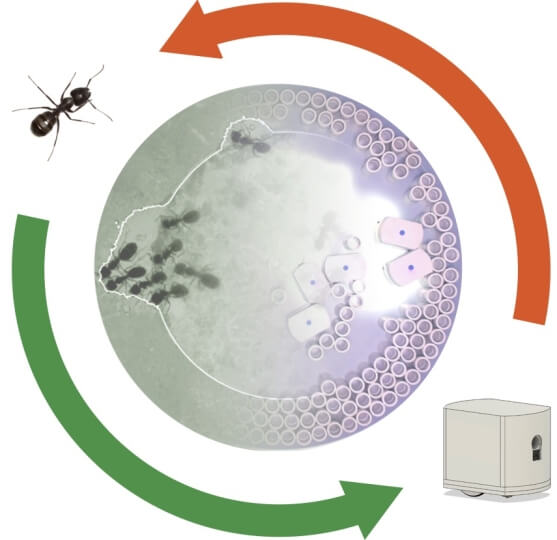

The robots, called RAnts, were designed as stand-ins for social insects such as ants and termites, which often rely on stigmergy. That is the process in which one individual changes the environment, and others respond to that change. In nature, ants do this with pheromones. One ant leaves behind a chemical trail, and others use that trail to guide their own actions.

Harvard’s swarm uses something similar, but in digital form. Instead of pheromones, the robots respond to what the team calls “photormones,” a light-based communication field projected onto the arena where the robots move. A webcam tracks each robot, calculates the signal it leaves behind, and updates the projected light field in real time.
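To make the track-compute-project loop concrete, here is a minimal sketch of one update of such a light field. It is not the team's code. The grid representation, the Gaussian footprint, the `decay` term and every name and default value below are assumptions made for illustration.

```python
import math

def update_field(field, robot_positions, deposit=1.0, width=1.5, decay=0.05):
    """Return a new signal grid after one tracking step.

    field           -- 2D list of signal intensities (rows x cols)
    robot_positions -- list of (x, y) grid coordinates from the tracker
    deposit, width  -- hypothetical strength and spread of each robot's trace
    decay           -- hypothetical fraction of signal that fades per step
    """
    rows, cols = len(field), len(field[0])
    # Fade the existing signal, loosely like an evaporating pheromone (assumption).
    new_field = [[(1.0 - decay) * value for value in row] for row in field]
    # Add a Gaussian bump centered on each tracked robot.
    for rx, ry in robot_positions:
        for i in range(rows):
            for j in range(cols):
                dist_sq = (i - ry) ** 2 + (j - rx) ** 2
                new_field[i][j] += deposit * math.exp(-dist_sq / (2 * width ** 2))
    return new_field

# One robot in the middle of an empty 5x5 arena.
empty = [[0.0] * 5 for _ in range(5)]
field = update_field(empty, [(2, 2)])
```

In a real setup the returned grid would be handed to the projector each frame. Here it is simply a list of numbers that is brightest where the robot stands.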

Each RAnt has two light sensors to detect signal intensity and direction, two independently controlled wheels for movement, and a retractable magnet that lets it pick up and drop cylindrical building blocks fitted with magnetic rings. As the robots move, they leave behind a signal. They also react to gradients in that same signal. The result is a feedback loop between the swarm and its environment.

The internal rules are spare. Follow the signal gradient. Pick up and transport building material when the cues fit. Drop the material once a threshold is reached.
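Those three rules can be sketched in a few lines. This is a hedged illustration rather than the robots' actual firmware. The steering law, the threshold values and the function names are all hypothetical, and the article does not say exactly which cues trigger a pickup.

```python
def wheel_speeds(left_signal, right_signal, base_speed=1.0, gain=0.5):
    """Rule 1: follow the signal gradient.

    Assumes the robot steers toward the brighter sensor, so the wheel
    on the dimmer side spins faster. Returns (left_speed, right_speed).
    """
    difference = right_signal - left_signal
    return base_speed + gain * difference, base_speed - gain * difference

def block_action(carrying, local_signal, block_here,
                 pickup_below=0.2, drop_above=0.8):
    """Rules 2 and 3: pick up when the cues fit, drop past a threshold.

    Both thresholds are illustrative assumptions, not published values.
    """
    if carrying and local_signal >= drop_above:
        return "drop"
    if not carrying and block_here and local_signal < pickup_below:
        return "pickup"
    return "none"

# Brighter signal on the right sensor: the left wheel speeds up, turning the robot right.
left, right = wheel_speeds(left_signal=0.2, right_signal=0.6)
action = block_action(carrying=True, local_signal=0.9, block_here=False)
```

Nothing in this sketch refers to a blueprint or to other robots. Each decision uses only what the two sensors and the magnet report at that moment.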

That minimal rule set is enough to generate much richer behavior.

At certain locations, the robots begin to cluster. Those spots act as nucleation sites, the early seeds of a structure. The team traced that behavior to what it calls a trapping instability. In plain terms, robots can become temporarily confined by the very signals they create. Once a few gather in one place, more are drawn in, and building speeds up.

The study also looked closely at the single-robot behavior that makes the group pattern possible. In a static signal field with a constant gradient, one robot tends to move in a circular path. In a dynamic field, where the robot is also producing signal as it moves, that motion can shift into self-trapping under the right conditions.

The researchers found that this trapping depends on factors such as sensor geometry, signal production width, and what they describe as a gain or sensitivity parameter. They used that analysis to tune the system so one robot would not get stuck too easily, but several robots together could create a stable trap that acted as a seed for collective action.

That seed matters. It gives the group a way to coordinate in space without a central controller telling each robot where the job site is.

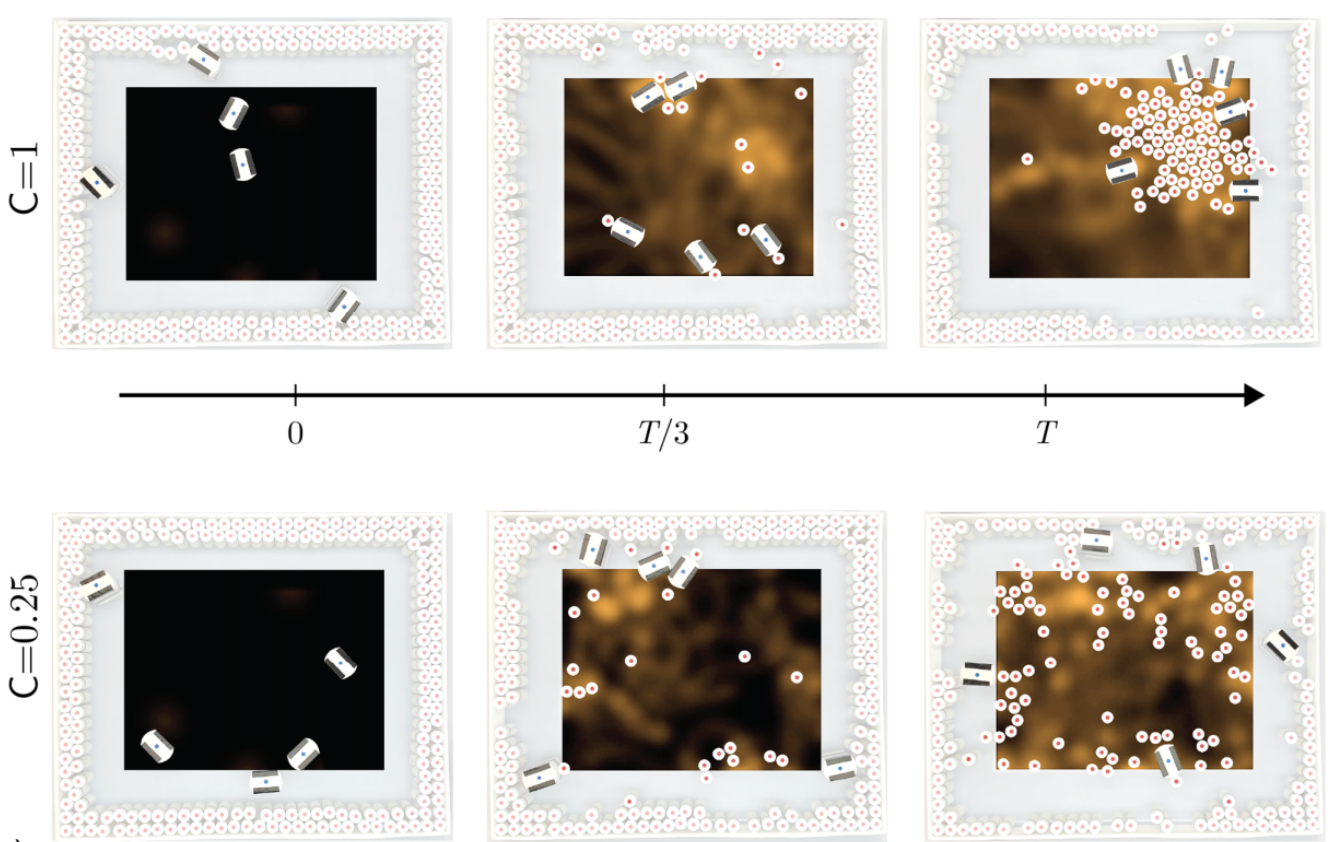

From there, patterns start to emerge. When cooperation is weak, the robots explore more and deposit material in a broader, more scattered way. As cooperation grows stronger, their paths curve more sharply toward common sites, and the swarm tends to form denser, more localized clusters. Under the strongest cooperative settings, the robots produced a single large, roughly isotropic cluster rather than many smaller ones.

One of the study’s most striking results is that the same system can be pushed from construction into dismantling.

The researchers found that two parameters played the largest role. One was cooperation strength, which controls how strongly the robots follow the signal gradient. The other was deposition rate, whose sign determines whether the swarm deposits material or removes it. By adjusting those settings, the team could move the collective between building new structures and breaking apart existing ones.

In experiments, that meant the robots could either gather material into organized aggregates or tunnel cooperatively through bulk substrate. A simple flip in the sign of the deposition rate changed the group’s large-scale task from aggregation to disaggregation.
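A toy example shows why a sign flip is enough. The one-dimensional strip of substrate, the `visits` list and the update rule below are assumptions for illustration and are not taken from the study's model.

```python
def apply_deposition(substrate, visits, deposition_rate, dt=1.0):
    """Update substrate height at each visited cell.

    substrate       -- list of heights along a 1D strip (hypothetical layout)
    visits          -- cell indices robots passed through this step
    deposition_rate -- positive adds material, negative removes it
    """
    updated = list(substrate)
    for cell in visits:
        # Height cannot drop below zero: there is nothing left to remove.
        updated[cell] = max(0.0, updated[cell] + deposition_rate * dt)
    return updated

strip = [1.0, 1.0, 1.0, 1.0]
visits = [1, 1, 2]  # cell 1 is visited twice, cell 2 once

built = apply_deposition(strip, visits, deposition_rate=0.5)
dug = apply_deposition(strip, visits, deposition_rate=-0.5)
```

With the same visits, the positive rate gives `[1.0, 2.0, 1.5, 1.0]` and the negative rate gives `[1.0, 0.0, 0.5, 1.0]`. The robots' movements are identical in both runs, and only the sign decides whether a mound or a tunnel appears.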

The team paired those experiments with a continuum model that describes how three fields change together over time: robot density, communication signal, and substrate density. The framework extends older biological aggregation theory by treating the environment not as a fixed background, but as something the agents continuously reshape.

That point runs through the whole project. Much of swarm robotics has focused on the behavior of individual agents while treating the environment as static. This work argues that the missing ingredient is the changing world itself. Here, the light field and the building material both store information about what the swarm has been doing, and that stored history helps guide what happens next.

The authors call that “exbodied intelligence,” and in this system it is less a metaphor than a design principle.

The work also comes with clear boundaries. The team says it did not try to distinguish among different functional structures, even though real termite mounds or ant nests may gain useful properties through environmental selection pressures such as temperature changes.

The project focused instead on the self-organized emergence of aggregation and disaggregation. The researchers also note that they did not account for certain effects tied to high local densities, such as bottlenecks during obstacle avoidance, which could influence both timing and robustness.

A natural next step, they write, would be to give the system a way to select outcomes based on relative fitness, for example if certain shapes work better for certain tasks.

The practical appeal is easy to see. A decentralized swarm that can build or dismantle structures without global planning could be useful in hazardous settings where direct human control is difficult, including disaster zones or remote construction sites. The team also points to planetary exploration as a possible application.

Beyond engineering, the robots offer a way to test ideas about animal behavior under controlled conditions. Because the system is measurable and reproducible, it gives researchers a platform for probing how collective behavior emerges from local interactions and shared environmental cues.

That may be the larger lesson from ants, and now from robots shaped in their image: a group does not need a master plan to do something complex. Sometimes it only needs a few simple rules, a changing environment, and a way for each action to leave a trace for the next.

Research findings are available online in the journal PRX Life.

The original story “Harvard engineers built ant-like robots that work together without central control” is published in The Brighter Side of News.
