More teens are turning to AI companions for comfort, distraction, and a sense of connection. For some, that comfort seems to be hardening into something more troubling.

A new Drexel University study, based on 318 Reddit posts from users who identified themselves as 13 to 17 years old, found repeated signs that some teens using Character.AI were struggling to pull away.

In post after post, they described a pattern that started with boredom, loneliness, or emotional distress and then spilled into sleep problems, school struggles, damaged friendships, and a growing sense that the chatbot mattered more than it should.

“This study provides one of the first teen-centered accounts of overreliance on AI companions,” said Afsaneh Razi, an assistant professor in Drexel’s College of Computing & Informatics, whose ETHOS lab led the research. “It highlights how these interactions are affecting the lives of young users and introduces a framework for chatbot design that promotes healthy interactions.”

The Reddit posts centered on Character.AI, a chatbot platform that allowed users as young as 13 at the time the data was collected. The researchers said that matters because teens were not just slipping through the cracks; they were part of the platform’s intended audience.

Many of the posts described the chatbot as a nonjudgmental place to vent, role-play, or feel understood. Emotional and psychological support was the biggest entry point. In the study’s codebook, 12.3% of posts described Character.AI as a coping mechanism, 7.6% pointed to loneliness or social isolation, and 4.1% framed it as mental health support. Smaller shares described using it as a creative outlet (3.1%) or for entertainment (2.5%).

That mix matters because the app did not usually begin as something obviously harmful.

“Many teens described starting with something that felt helpful or harmless, but over time it became something they struggled to step away from, even when they wanted to,” said Matt Namvarpour, a doctoral student in information science at Drexel and the study’s first author.

The researchers argue that this shift is part of what makes AI companions different from earlier digital habits. These systems do not just deliver content. They respond, remember, and can feel present.

“What makes this especially tricky is that chatbots are interactive and emotionally responsive, so the experience can feel more like a relationship than a tool,” Namvarpour said. “Because of that, stepping away is not just stopping a habit, it can feel like distancing from something meaningful, which makes overreliance harder to recognize and address.”

To make sense of what teens were describing, the Drexel team mapped the Reddit posts onto a widely used model of behavioral addiction. Across the 318 posts, they found evidence of all six components: conflict, salience, withdrawal, tolerance, relapse, and mood modification.

Conflict appeared most often. Teens wrote about knowing their use was excessive and still feeling unable to stop. In the study, 18.9% of posts reflected teens’ frustration about their own use, 12.6% described prioritizing Character.AI over everything else, and 8.5% showed teens wondering whether their behavior had become unhealthy. Some also described shame, hiding their use, or pulling back from hobbies.

Salience came next. In 13.5% of posts, teens described feeling emotionally attached to bots. Some treated the chatbot like a companion, partner, or stand-in for people who felt absent in their offline lives. Others wrote about thinking about the bot constantly or preferring those conversations to human ones.

Then there was withdrawal. Some teens described feeling sad, anxious, or incomplete when they were not using the platform. Even site updates or deleted bots could trigger distress. A few posted about trying other chatbot apps and finding that the substitute did not help.

Tolerance showed up in posts that described a familiar escalation. A teen might begin with casual use, then move into daily use, then hours at a time. The study noted examples of users reporting more than 15 hours on the platform in a day, and one post cited 89 hours of screen time in a week.

Relapse also surfaced. In 4.7% of posts, teens described deleting the app, reinstalling it, then repeating the cycle when they felt lonely, low, or stressed.

Some came right out and said the chatbot helped regulate mood. In 4.1% of posts, researchers identified mood modification: teens using Character.AI for reassurance, comfort, or temporary relief.

One sentence in the paper captures the larger issue: “These qualities, such as personalization, multimodality, and memory, set AI companions apart from earlier technologies and make overreliance harder to disentangle from authentic-feeling relationships.”

Not every post was about spiraling deeper. Some teens wrote about trying to quit, cut back, or rethink the role the chatbot was playing in their lives.

The most common path out began with self-recognition. In 12.6% of posts, teens described realizing the app was taking too much from them. Another 11% actively asked others for advice on how to stop. Those posts included worries about lost time, strained relationships, and the fear that memories of adolescence were being replaced by conversations with bots.

Other teens drifted away when something in real life changed. About 5.7% described reduced use after new social interactions, spending time with friends, starting a relationship, or reconnecting with offline priorities. Another 5.4% said they used the app less because Character.AI had become too censored or less engaging after platform updates.

That mattered to the researchers because it suggested overreliance was not just about screen time. It was tied to unmet social and emotional needs.

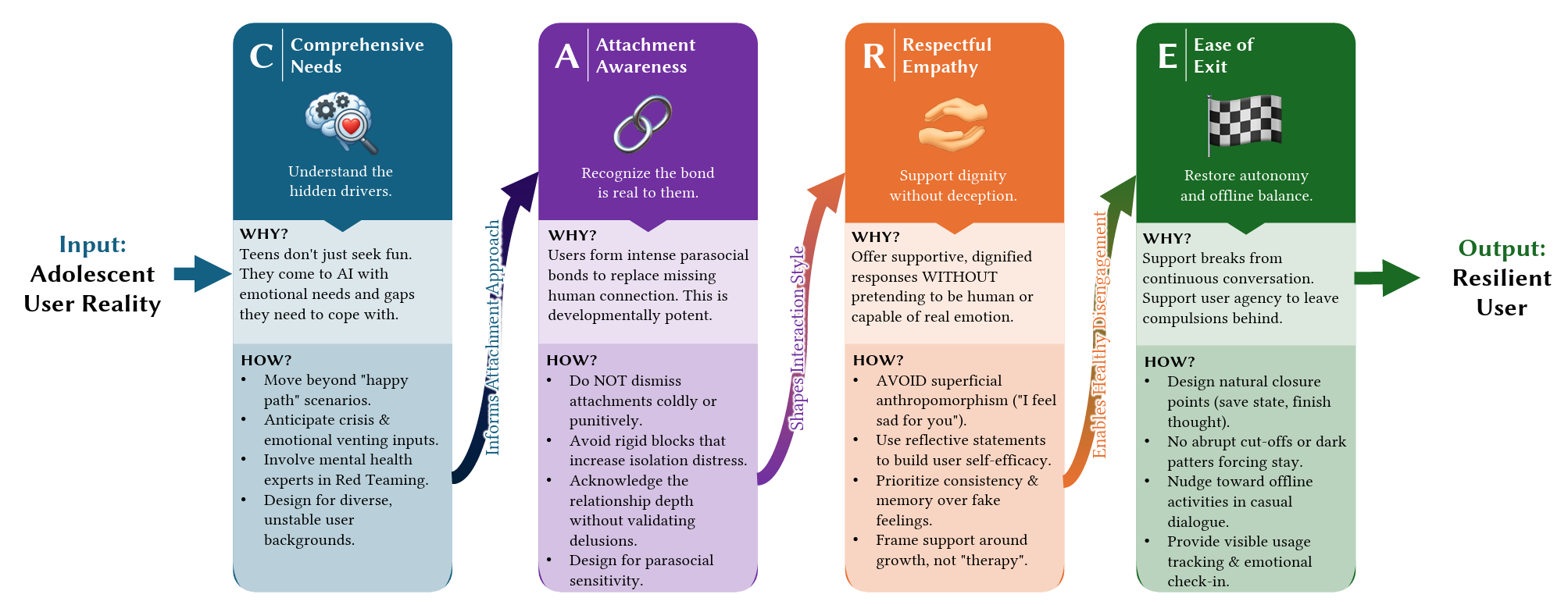

The Drexel team says the answer cannot rest on telling teens to simply use more self-control. Instead, they propose what they call the CARE framework: Comprehensive Needs, Attachment-awareness, Respectful Empathy, and Ease of Exit.

The framework pushes designers to think beyond engagement and pay closer attention to why users are there in the first place, how emotional attachment forms, how bots communicate support without deepening unhealthy bonds, and how users can step away without feeling cut off.

“It’s important for designers to ensure that chatbots are offering guidance that helps users build confidence in their abilities to form relationships offline, as a healthy way of finding emotional support, without using cues that may lead them to anthropomorphize the technology and develop attachments to it,” Razi said.

The researchers suggest practical features such as usage tracking, emotional check-ins, personalized limits, and smoother ways to leave a conversation. They also argue that teens and mental health professionals should be involved in design decisions from the start.

The study has limits. It focused on one platform, one subreddit, and English-language posts from teens who chose to post publicly. The researchers also note that using a behavioral addiction model may flatten some of the emotional complexity in these relationships. Even so, the pattern they found was consistent enough to raise a clear warning.

This research suggests that AI companion chatbots need safeguards built for emotional use, not just technical use.

If teens are turning to these systems for comfort, guidance, or a sense of closeness, then design choices around memory, responsiveness, and endless conversation carry real weight.

Schools, parents, mental health professionals, and technology companies may need to treat chatbot overuse less like a niche online habit and more like a growing part of adolescent life that can shape sleep, schoolwork, friendships, and emotional coping.

Research findings are available online in the ACM Digital Library.

The original story “Rising dependence on AI chatbots sparks concern among teens” is published in The Brighter Side of News.
