A plane ticket jumps in price after a second search. A streaming service offers one customer a deal that never appears for someone else. An online store quietly adjusts what you pay, not because the product changed but because the system thinks it knows how much you are willing to spend.

That quiet shift sits at the center of a growing legal and economic debate over algorithmic pricing. Companies now use algorithms, including AI-powered systems, to sort through detailed personal and behavioral data. They make pricing decisions at remarkable speed. The practice has sparked concern for two main reasons: whether algorithms can help firms collude, and whether they can charge different people different prices for the same product. The second issue, often called algorithmic price discrimination, has drawn less attention. Even so, it may create a different kind of consumer harm.

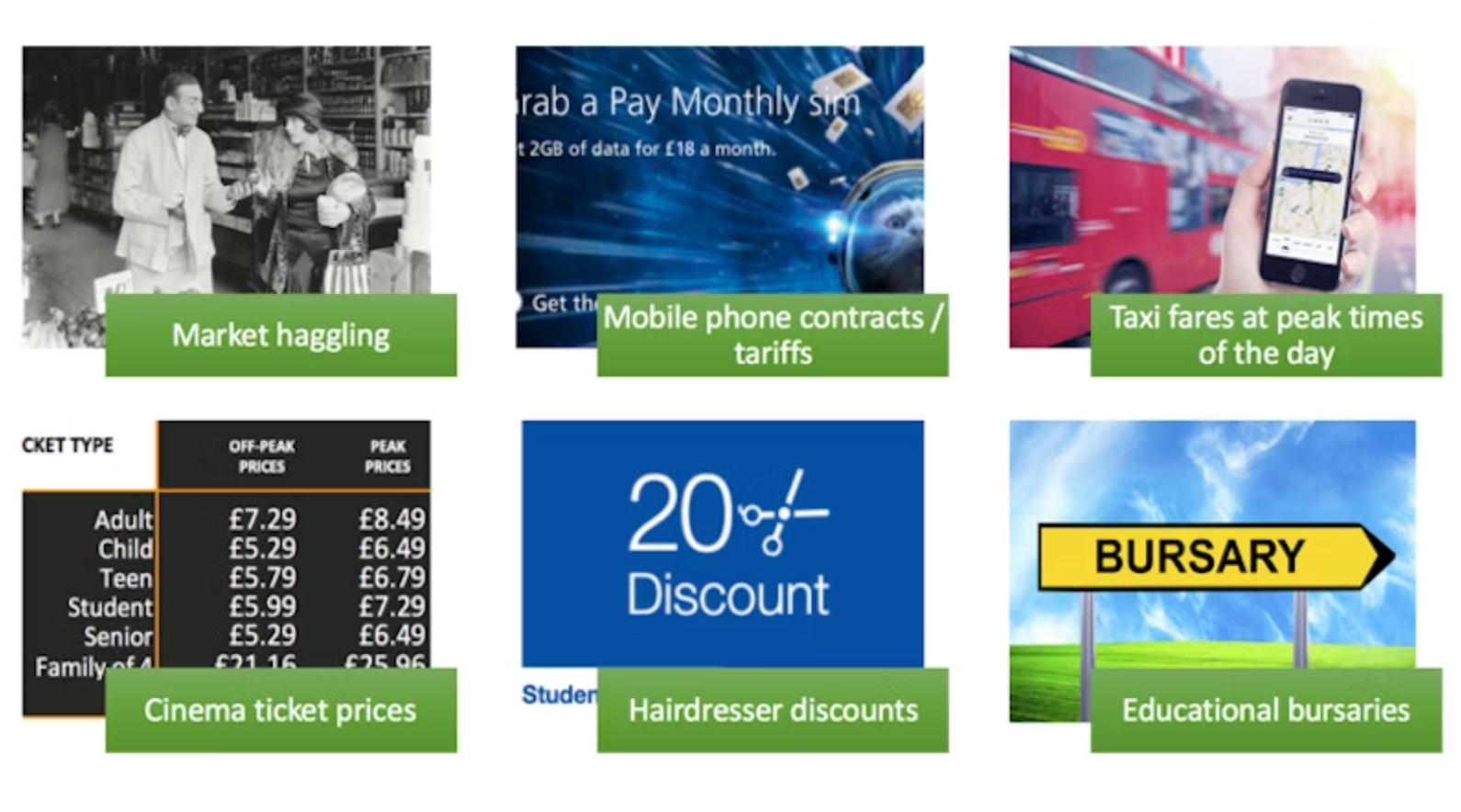

Price discrimination itself is not new. Businesses have long charged different prices to different groups, often using visible categories like age or location. Student discounts and region-based pricing are familiar examples. In economic theory, this can make sense. Lower prices for some buyers can expand sales, help firms recover high fixed costs, and sometimes increase output.

What has changed is the level of precision.

Digital markets allow firms to move far beyond old-fashioned segmentation. Online platforms can collect and analyze data such as age, gender, education, browsing history, location, login activity, transaction records, and social media behavior. Using that information, algorithms can build detailed consumer profiles. They can also estimate how sensitive a person may be to price.

That makes it possible to tailor prices in near real time. One buyer may see a higher price because the system reads repeated visits to a page as a sign of urgency. Another may get a lower price because the platform predicts that person will leave unless given a discount. So, the same product can end up carrying different prices under the same market conditions.
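The logic described above can be sketched as a toy rule. This is only an illustration: the function name, the visit threshold, and the surcharge and discount rates are all assumptions, not details of any real platform's pricing system.

```python
def quoted_price(base_price: float, page_visits: int, likely_to_leave: bool) -> float:
    """Toy personalized-pricing rule (all thresholds and rates are hypothetical)."""
    price = base_price
    if page_visits >= 3:
        # Assumed: repeated visits are read as urgency, so add a 10% surcharge.
        price *= 1.10
    if likely_to_leave:
        # Assumed: a predicted exit triggers a 15% retention discount.
        price *= 0.85
    return round(price, 2)


# Same product, same base price, different shoppers.
urgent_shopper = quoted_price(100.0, page_visits=5, likely_to_leave=False)
wavering_shopper = quoted_price(100.0, page_visits=1, likely_to_leave=True)
print(urgent_shopper, wavering_shopper)
```

Real systems would presumably rely on statistical models rather than two hand-written rules, but the outcome is the same in kind: two people see different prices for reasons neither can observe.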

This practice comes closest to what economists call first-degree price discrimination, in which a seller charges each customer the maximum that customer is willing to pay. For years, that idea was treated more as theory than reality because it required too much information and depended on blocking resale between consumers. Digital markets have weakened both barriers.

Platforms now have better tools to gather user data and stronger ways to prevent arbitrage. Non-transferable accounts, digital rights management, account verification, geolocation controls, and region-locked access all make it harder for users to bypass personalized pricing or resell access to others. In some markets, resale may be technically possible but still too difficult or commercially unattractive to matter.

Even so, the evidence is mixed on how far companies have gone. Research has shown that pricing algorithms can make highly targeted pricing possible, but strong proof of true one-to-one personalized pricing in real markets remains limited. In many cases, firms may still be operating closer to third-degree price discrimination. This means dividing users into segments rather than calculating a unique price for each individual.
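The difference between one posted price, segment pricing, and fully personalized pricing can be illustrated with a small numerical sketch. The willingness-to-pay figures, the segment names, and the zero-marginal-cost assumption are invented for illustration and do not come from the research discussed here.

```python
from typing import Dict, List, Tuple

# Hypothetical willingness-to-pay (WTP) per buyer, grouped by a visible segment.
WTP_BY_SEGMENT: Dict[str, List[float]] = {
    "student": [4.0, 6.0, 7.0],
    "regular": [9.0, 12.0, 15.0],
}


def best_single_price(wtps: List[float]) -> Tuple[float, float]:
    """Return (price, revenue) for the revenue-maximizing single price.

    Assumes zero marginal cost and that a buyer purchases whenever WTP >= price.
    Only observed WTP values need to be checked as candidate prices.
    """
    best = (0.0, 0.0)
    for price in sorted(set(wtps)):
        revenue = price * sum(1 for w in wtps if w >= price)
        if revenue > best[1]:
            best = (price, revenue)
    return best


def uniform_revenue(segments: Dict[str, List[float]]) -> float:
    """One posted price for everyone."""
    everyone = [w for wtps in segments.values() for w in wtps]
    return best_single_price(everyone)[1]


def segmented_revenue(segments: Dict[str, List[float]]) -> float:
    """Third-degree: one price per segment."""
    return sum(best_single_price(wtps)[1] for wtps in segments.values())


def personalized_revenue(segments: Dict[str, List[float]]) -> float:
    """First-degree: each buyer is charged exactly their WTP."""
    return sum(w for wtps in segments.values() for w in wtps)


print(uniform_revenue(WTP_BY_SEGMENT))
print(segmented_revenue(WTP_BY_SEGMENT))
print(personalized_revenue(WTP_BY_SEGMENT))
```

With these made-up numbers, revenue rises from 30 under a single price to 39 with two segment prices and 53 with fully personalized prices. The sketch assumes the seller knows every buyer's willingness to pay exactly, which is the information barrier that has historically kept first-degree discrimination theoretical.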

Economists have long argued about whether price discrimination helps or harms welfare. In some situations, personalized pricing can increase output and improve efficiency. Simulation work cited in this debate suggests that more targeted pricing can raise profits and sometimes broaden market coverage. In competitive settings, it may even intensify rivalry and lower average prices.

But efficiency does not settle the question.

The key concern raised by algorithmic price discrimination is not always that average prices go up. Sometimes they may not. The deeper problem is that people often judge prices not only by amount, but by whether the process behind them feels legitimate.

Consumers tend to accept price differences when the reasons are visible and understandable. A student discount makes intuitive sense. Peak-hour pricing can seem reasonable if demand is obviously higher. Insurance pricing based on transparent risk factors may also be accepted, at least in some sectors.

Personalized algorithmic pricing is different. The system may rely on invisible inferences pulled from personal data, and consumers usually cannot tell why they were charged more than someone else. That opacity changes the experience of the transaction.

Studies discussed in this legal analysis point in the same direction: consumers react negatively when they think prices were set through hidden personal profiling. They are especially likely to see the practice as unfair when they learn that others paid less for no clear reason, or when they suspect personal data played a role. That reaction can weaken trust, alter future buying decisions, and reduce the value consumers feel they received. This can happen even when traditional economic measures would not count the transaction as harmful.

One of the best-known examples came in 2000, when Amazon experimented with charging different prices for the same DVDs based on customers’ purchasing behavior. When customers found out, the backlash was swift. The episode became an early warning about how badly non-transparent pricing can damage trust.

That tension between technical efficiency and perceived unfairness matters because existing competition law is not neatly designed for it.

Under European Union law, Article 102 of the Treaty on the Functioning of the European Union bans abuse by dominant firms. Some forms of price discrimination can already fall under that rule. But the usual legal framework has focused more on whether discrimination harms competitors than whether it unfairly exploits end users.

That creates a mismatch. Algorithmic price discrimination aimed at consumers may not distort downstream competition in the classic sense. Instead, the harm may lie in the pricing process itself, especially when a dominant company uses opaque data-driven methods that buyers cannot detect, understand, or challenge.

For that reason, the argument here is that Article 102(a), which bars unfair purchase or selling prices and other unfair trading conditions, may offer a better route than Article 102(c). The latter is more tied to discriminatory treatment that places trading partners at a competitive disadvantage.

The legal challenge is that EU case law on excessive or unfair pricing developed around a different model. Traditional cases usually asked whether a price was excessively high compared with cost or with benchmarks in other markets. Personalized pricing does not always look like that. It may leave the average market price unchanged while quietly charging some users more than others for identical goods or services.
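A short arithmetic sketch shows why a test built around the overall price level can miss this pattern. The prices below are hypothetical and chosen only so that both lists share the same average.

```python
from statistics import mean
from typing import Dict, List

# Hypothetical prices paid by four buyers for an identical product.
uniform_prices: List[float] = [10.0, 10.0, 10.0, 10.0]
personalized_prices: List[float] = [7.0, 9.0, 11.0, 13.0]


def summarize(prices: List[float]) -> Dict[str, float]:
    """Average price and the gap between the highest and lowest price paid."""
    return {"average": mean(prices), "spread": max(prices) - min(prices)}


print(summarize(uniform_prices))
print(summarize(personalized_prices))
```

Both lists average 10, so a comparison of average price against cost or against other markets treats them identically. Only the spread, 0 in the first case and 6 in the second, reveals that some buyers paid nearly twice what others did.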

In those cases, the issue may be less about whether the whole price level is inflated and more about whether the higher price can be justified at all. If a dominant firm cannot point to cost, quality, innovation, or some other legitimate value-based factor, then the lack of justification starts to matter. That is where unfairness moves from a vague moral concern to a possible legal one.

Research findings are available online in the Journal of Competition Law & Economics.

The original story “The hidden logic behind AI pricing and why it may be unfair” is published in The Brighter Side of News.
